Here's a tension that doesn't get discussed enough in enterprise AI coverage: Google has probably the most comprehensive AI-to-cloud stack of any technology company on the planet, and the most genuinely useful individual tool for O2C practitioners is a free research assistant bundled into a Google Workspace subscription.

That's not a criticism. It's a useful framing. Google's strength in 2026 is breadth: the 1-million-token context window on Gemini 2.5 Flash, a Document AI invoice parser that plugs directly into SAP, NotebookLM's source-grounded contract Q&A, and a $240 billion cloud backlog that signals long-term platform viability. But breadth is not the same as depth in any specific O2C workflow. This post does the practical work of separating where Google is genuinely ready for production from where it is still an architectural possibility requiring custom build.

This is the third post in the vendor deep-dive series. Post 05 covered Claude and Anthropic. Post 06 covered OpenAI. This one covers Google — and specifically what finance operations leaders evaluating Gemini, Vertex AI, Workspace, and NotebookLM should actually know before committing to the platform.

The Model Lineup: What's Available and What Isn't

Google's AI model portfolio as of March 2026 has two production tiers (the current Gemini 2.5 family and the legacy 1.5 family) and a preview tier with an important caveat that every O2C team needs to understand before architectural planning.

The production tier — Gemini 2.5 — is genuinely ready for enterprise work. Gemini 2.5 Flash and 2.5 Pro have been generally available for months, have stable pricing, and are the models on which the Google enterprise stack is currently built. They are the models you should use today.

Gemini 1.5 models (legacy): Gemini 1.5 Pro (~$7.00/M input, ~$21.00/M output) and 1.5 Flash (~$0.35/M input, ~$1.05/M output) remain available but are being superseded by the 2.5 family. Google has signaled these models may be deprecated in 2026. If your organization is still on 1.5 contracts, plan migration to 2.5 equivalents before an end-of-life notice forces the timeline.

The preview tier — Gemini 3 — exists but is not ready for production deployment. Gemini 3 Flash and Gemini 3.1 Pro appeared in preview on Google AI Studio and Vertex AI in early 2026. Sundar Pichai described Gemini 3 as "a major milestone" in Alphabet's Q4 2025 earnings call.[1] Gemini 3.1 Pro shows strong benchmark performance (more on that below). But as of March 2026, neither model is generally available. Do not architect production O2C workflows around Gemini 3.x until Google confirms general availability — preview models can be deprecated, repriced, or changed in behavior before GA.

This distinction matters because a lot of coverage conflates benchmark numbers from Gemini 3.1 Pro with the production capabilities of the platform. When you see Google cited near the top of a finance benchmark, check which model produced the score and whether it's available for your workloads.

Pricing: The 1-Million-Token Advantage

Google's production model pricing is competitive on the dimensions that matter most for O2C work: high-volume document ingestion at reasonable per-token cost, and a context window large enough to hold complete financial documents without chunking.

Here's the current pricing picture with the models most relevant to O2C:

| Model | Input (USD / 1M tokens) | Output (USD / 1M tokens) | Context Window |
|---|---|---|---|
| Gemini 2.5 Flash-Lite | $0.10 | $0.40 | 1M tokens [2] |
| Gemini 2.5 Flash | $0.30 | $2.50 | 1M tokens [3] |
| Gemini 2.5 Flash (batch) | $0.15 | $1.25 | 1M tokens [4] |
| Gemini 2.5 Pro | $1.25 (≤200K) / $2.50 (>200K) | $10.00 (≤200K) / $15.00 (>200K) | 2M tokens [2][5] |
| Gemini 2.5 Pro (batch) | $0.625 | $5.00 | 2M tokens [4] |
| GPT-4.1 mini (comparison) | $0.40 | $1.60 | 1M tokens [6] |
| Claude Haiku 4.5 (comparison) | $1.00 | $5.00 | 200K tokens [7] |

A few practical observations for O2C teams building on this pricing:

The 1M-token context window at $0.30 per million input tokens is Gemini's clearest advantage over competitors at the efficient tier. For input-heavy workflows such as remittance extraction, invoice parsing, and customer correspondence summarization, where prompt tokens dominate output tokens, you're comparing $0.30 (Gemini 2.5 Flash) to $0.40 (GPT-4.1 mini) to $1.00 (Claude Haiku 4.5) per million input tokens. The price differential compounds at volume. Consider a team processing 5,000 documents per day at roughly 2,000 tokens each, or about 10 million input tokens per day. Moving that workload from Claude Haiku 4.5 to Gemini 2.5 Flash cuts the input token price by 70% ($1.00 to $0.30) and the output token price by 50% ($5.00 to $2.50), while keeping a context window large enough for whole-document ingestion without chunking.
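The arithmetic behind those percentages can be checked in a few lines. This is a minimal sketch using the list prices from the pricing table above and the 5,000 documents × 2,000 tokens scenario; the 200 output tokens per document is an assumption added for illustration, not a figure from the source.

```python
# Per-million-token list prices in USD (input, output), from the pricing table above.
PRICES = {
    "gemini-2.5-flash": (0.30, 2.50),
    "gpt-4.1-mini": (0.40, 1.60),
    "claude-haiku-4.5": (1.00, 5.00),
}

DOCS_PER_DAY = 5_000
INPUT_TOKENS_PER_DOC = 2_000
OUTPUT_TOKENS_PER_DOC = 200  # assumption: short structured extraction output


def daily_cost(model: str) -> float:
    """Daily API cost in USD for the assumed document volume."""
    input_price, output_price = PRICES[model]
    input_millions = DOCS_PER_DAY * INPUT_TOKENS_PER_DOC / 1_000_000
    output_millions = DOCS_PER_DAY * OUTPUT_TOKENS_PER_DOC / 1_000_000
    return input_millions * input_price + output_millions * output_price


def price_reduction(old: float, new: float) -> float:
    """Percentage reduction when moving from price `old` to price `new`."""
    return (old - new) / old * 100


for model in PRICES:
    cost = daily_cost(model)
    print(f"{model}: ${cost:.2f}/day, about ${cost * 365:,.0f}/year")

print(f"Haiku 4.5 -> Flash input price cut: {price_reduction(1.00, 0.30):.0f}%")
print(f"Haiku 4.5 -> Flash output price cut: {price_reduction(5.00, 2.50):.0f}%")
```

Under these assumptions Gemini 2.5 Flash comes to $5.50 per day against $15.00 for Claude Haiku 4.5. The comparison with GPT-4.1 mini is much closer ($5.60 per day) because GPT-4.1 mini's output price is lower: at 2,000 input tokens per document, Flash stays cheaper only while output remains below roughly 222 tokens per document, which is why the "input-dominated" qualifier matters.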

Gemini 2.5 Flash's thinking mode has no separate price tier. Flash supports chain-of-thought reasoning for more complex extraction tasks, and thinking tokens are rolled into the standard output token count rather than billed as a separate line item.[8] They still count as billed output, so longer reasoning raises the cost of a call, but there is no separate model tier or pricing structure to manage. That matters for dispute triage and exception handling workflows where you want structured reasoning.
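Because thinking tokens are billed at the output rate, a reasoning-heavy call costs more than a plain one even though no extra line item appears. A minimal sketch, assuming Gemini 2.5 Flash's $0.30/$2.50 list prices and hypothetical token counts for a single dispute-triage call:

```python
INPUT_PRICE = 0.30   # USD per 1M input tokens (Gemini 2.5 Flash, from the pricing table)
OUTPUT_PRICE = 2.50  # USD per 1M output tokens; thinking tokens are billed at this rate


def call_cost(prompt_tokens: int, answer_tokens: int, thinking_tokens: int = 0) -> float:
    """Cost of one call in USD, counting thinking tokens as ordinary output tokens."""
    billed_output = answer_tokens + thinking_tokens
    return (prompt_tokens * INPUT_PRICE + billed_output * OUTPUT_PRICE) / 1_000_000


# Hypothetical dispute-triage call: 3,000-token email thread, 150-token structured answer.
plain = call_cost(3_000, 150)
with_thinking = call_cost(3_000, 150, thinking_tokens=1_000)
print(f"without thinking: ${plain:.6f}, with 1,000 thinking tokens: ${with_thinking:.6f}")
```

In the `google-genai` Python SDK, the thinking budget can be capped through `types.ThinkingConfig(thinking_budget=...)`, and the response's `usage_metadata.thoughts_token_count` reports how many thinking tokens were used; confirm these names against the current SDK documentation before relying on them.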

Vertex AI's 50% batch discount is real and underused. Any workflow that doesn't require real-time response — overnight remittance runs, end-of-day collections queue processing, bulk contract review — qualifies for Vertex AI Batch API pricing. Gemini 2.5 Flash batch drops to $0.15 input / $1.25 output per million tokens.[4] That is the lowest-cost high-context option from a major provider at this capability level. Finance teams doing consistent overnight batch jobs should be calculating the annual savings of moving eligible workloads to batch before committing to real-time API architecture.
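Estimating the annual batch saving for a recurring job takes only the per-run token volumes. A minimal sketch using the Gemini 2.5 Flash real-time and batch prices above; the 20M input / 2M output tokens per night is a hypothetical workload, not a figure from the source.

```python
REALTIME = (0.30, 2.50)  # Gemini 2.5 Flash, USD per 1M input / output tokens
BATCH = (0.15, 1.25)     # Gemini 2.5 Flash batch pricing from the table above


def annual_cost(prices: tuple[float, float], input_m: float, output_m: float, runs: int = 365) -> float:
    """Yearly cost in USD for a job using `input_m` / `output_m` million tokens per run."""
    in_price, out_price = prices
    return runs * (input_m * in_price + output_m * out_price)


# Hypothetical overnight remittance run: 20M input and 2M output tokens per night.
realtime = annual_cost(REALTIME, 20, 2)
batch = annual_cost(BATCH, 20, 2)
print(f"real-time ${realtime:,.0f}/yr, batch ${batch:,.0f}/yr, saving ${realtime - batch:,.0f}/yr")
```

Jobs that run only on business days should lower `runs` accordingly; because the discount is a flat 50% on both input and output, the saving is always half the real-time cost of the eligible workload.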

Gemini 2.5 Pro context caching reduces costs for repeated document ingestion. If your workflow re-processes the same master agreement or customer file across multiple AI calls, Pro's context caching bills cached input at $0.125 per million tokens, a 90% discount from the standard $1.25 rate.[5] For contract review workflows that hit the same base document repeatedly, this is worth modeling.
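A first-pass model of that saving compares input cost with and without caching for a series of questions against one agreement. This is a minimal sketch: it assumes every call reads the document from cache at $0.125 per million tokens, ignores the one-time cost of creating the cache, and takes Google's separately billed cache storage charge as a parameter rather than hard-coding a figure. The document size and call count are hypothetical.

```python
STANDARD_INPUT = 1.25  # USD per 1M input tokens, Gemini 2.5 Pro (prompts <= 200K tokens)
CACHED_INPUT = 0.125   # USD per 1M cached input tokens, as quoted above


def contract_review_cost(doc_tokens: int, question_tokens: int, calls: int,
                         cache_storage_usd: float = 0.0) -> tuple[float, float]:
    """Input-side cost (uncached, cached) of asking `calls` questions about one document.

    Output tokens are ignored because they cost the same with or without caching.
    `cache_storage_usd` is your own estimate of the cache storage charge.
    """
    uncached = calls * (doc_tokens + question_tokens) * STANDARD_INPUT / 1_000_000
    cached = (
        calls * doc_tokens * CACHED_INPUT          # document read from cache each call
        + calls * question_tokens * STANDARD_INPUT  # new question text billed normally
    ) / 1_000_000 + cache_storage_usd
    return uncached, cached


# Hypothetical: 40 questions against a 150,000-token master agreement.
uncached, cached = contract_review_cost(150_000, 500, 40)
print(f"uncached ${uncached:.3f}, cached ${cached:.3f} (before storage charges)")
```

In this example the input cost drops from about $7.53 to about $0.78, so the storage charge would have to be large before caching stops paying off.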

Google Workspace AI: What's Live for Finance Teams Today

In January 2025, Google eliminated the separate Gemini add-on SKU and bundled AI features directly into Business Standard and higher Workspace tiers. The old per-seat add-ons were discontinued.[9] For any organization already on Business Standard (approximately $14–17/user/month), Gemini AI is included — it is no longer a separate budget line. That's a meaningful shift in the ROI calculation for teams that had been deferring AI adoption because of add-on cost.

What is generally available in Workspace as of March 2026:

- Gmail: a Gemini side panel for email summarization, drafting, and contextual Q&A, useful for collections teams reviewing customer dispute correspondence histories before outreach.[10]

- Docs: Gemini embedded at the bottom of the document, with drafting, tone matching, and format replication from reference documents.[11]

- Sheets: AI-driven spreadsheet generation, formula creation, and table population from multi-source data via a single prompt.[12]

- Meet: AI note-taking, meeting summaries, and action item extraction.

- Workspace Studio (Business Standard and above): multi-step AI automation chains such as auto-labeling workflows, pre-meeting briefings, and repetitive document tasks.[9]

What's important to understand: Google announced a new batch of Gemini features for Docs, Sheets, Slides, and Drive on March 10, 2026, and most of the coverage treated them as if they were available today. They are in beta, English-only, and initially limited to AI Ultra and Pro subscribers; they are not available on Business Standard plans at launch.[11][13] Finance teams seeing headlines about the March 2026 Workspace updates should treat those as near-future capabilities, not something to build into immediate workflow planning.

For O2C-specific use in Sheets, Gemini can assist with DSO calculation templates, aging bucket formula generation, and organizing customer payment data pulled from Gmail threads. What is not documented in primary sources: Google has not published AR-specific templates, dispute workflow automation, or cash application-specific functionality within Sheets. These use cases require custom prompt engineering or Workspace Studio automation chains — not out-of-the-box O2C functionality. The honest distinction between "Gemini can help build this" and "Gemini ships this pre-built" matters when scoping implementation time.
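Whatever formulas Gemini drafts in Sheets, it helps to have an independent reference to check them against. A minimal sketch of the two calculations named above, assuming the common DSO definition (ending AR divided by credit sales for the period, times the days in the period) and standard 30-day aging buckets; both conventions vary by company, and the figures in the example are hypothetical.

```python
from datetime import date


def dso(ending_ar: float, credit_sales: float, days_in_period: int) -> float:
    """Days sales outstanding: (ending AR / credit sales) * days in the period."""
    return ending_ar / credit_sales * days_in_period


def aging_bucket(due: date, as_of: date) -> str:
    """Assign an open invoice to a standard aging bucket by days past due."""
    days_past_due = (as_of - due).days
    if days_past_due <= 0:
        return "Current"
    if days_past_due <= 30:
        return "1-30"
    if days_past_due <= 60:
        return "31-60"
    if days_past_due <= 90:
        return "61-90"
    return "90+"


# Hypothetical quarter: $1.2M ending AR on $3.6M of credit sales over 90 days.
print(round(dso(1_200_000, 3_600_000, 90), 1))
# An invoice due January 15 is 45 days past due on March 1, 2026.
print(aging_bucket(date(2026, 1, 15), date(2026, 3, 1)))
```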

NotebookLM: The Practical O2C Knowledge Layer

NotebookLM deserves its own section because it is, practically speaking, the most accessible and immediately usable Google AI product for O2C practitioners who are not developers.

The core architecture is different from a general-purpose chatbot: NotebookLM answers questions only from the documents you upload, and every answer is cited back to the exact source passage.[14][15] Grounding answers in your uploaded corpus sharply reduces the risk of fabricated answers, and the citations let a reader check each claim against the source. That constraint, which might sound limiting, is the feature that makes it appropriate for compliance-sensitive O2C workflows. When a collector asks "what are the dispute escalation terms for this customer's contract," they get a cited answer with the clause highlighted, not a plausible-sounding AI fabrication.

Current capabilities include: ingesting up to 300 sources per notebook — PDFs, Google Docs, Slides, YouTube, websites, Drive files; generating Audio Overviews (podcast-style summaries of complex documents); creating FAQs, briefing docs, and study guides from source material; shared team notebooks with usage analytics for managers.[14][16]

Enterprise availability: NotebookLM moved to general enterprise availability in December 2024. The tiers relevant to business users are NotebookLM (standard, included in Business Starter and above for basic use), NotebookLM Plus (included in Business Standard and above — 5x capacity, team sharing, VPC Service Controls compliance, audit trails, admin controls), and NotebookLM Enterprise (available standalone at $9/user/month, or included in Gemini Enterprise at $25/user/month).[17][18]

Google's data policy is explicit on the question that matters most: uploaded Workspace user data is not used to train Gemini models.[15][19] NotebookLM Plus adds IAM role-based sharing, full audit trails, and VPC Service Controls for organizations requiring data isolation. This is significant for teams working with customer credit information, master agreements, and AR dispute records — all of which require careful handling under standard data governance policies.

Where NotebookLM fits in O2C workflows:

Contract review is the clearest application. Ingest master service agreements, payment terms schedules, and discount structures. A collector can query natural-language questions about net terms, dispute clauses, escalation paths, and penalty provisions — and every answer is cited to the exact contract clause. This eliminates the "searching a 200-page PDF" problem without requiring a custom AI build.

Collections policy documentation is the second strong use case. Upload AR runbooks, credit policy, dispute handling SOPs, and escalation matrices. New collectors get cited answers from your actual runbooks rather than generalized AI responses or having to ask a manager. Team notebooks with usage analytics let managers see which policies are being queried most — useful for identifying where documentation needs improvement.

Credit review support is a logical extension. Upload customer 10-Ks, Dun & Bradstreet reports, credit committee memos, and aging summaries. A credit analyst can ask "what were this customer's liquidity ratios in the last three annual reports" across the full uploaded corpus and get a cited synthesis.

One important flag: No independently verified examples of finance or O2C teams specifically deploying NotebookLM for AR workflows appeared in public sources as of March 2026.[20] The use cases above are logical extrapolations from documented capabilities and Google's own positioning. The Berenberg case study (discussed below) mentions NotebookLM adoption for document summarization in investment research, but not specifically for O2C. These are real capabilities for real tasks — but they are practitioner-inferred applications, not confirmed production deployments by named O2C teams.

For Business Standard subscribers who already have NotebookLM Plus included, the cost to experiment is zero. Start there.

Vertex AI for Enterprise O2C: Document AI, Agent Builder, and the SAP Integration Story

Vertex AI is Google's enterprise AI platform — the technical layer where O2C teams build custom workflows, automate document processing, and connect AI to ERP systems. Understanding what's production-ready vs. what requires custom engineering is essential.

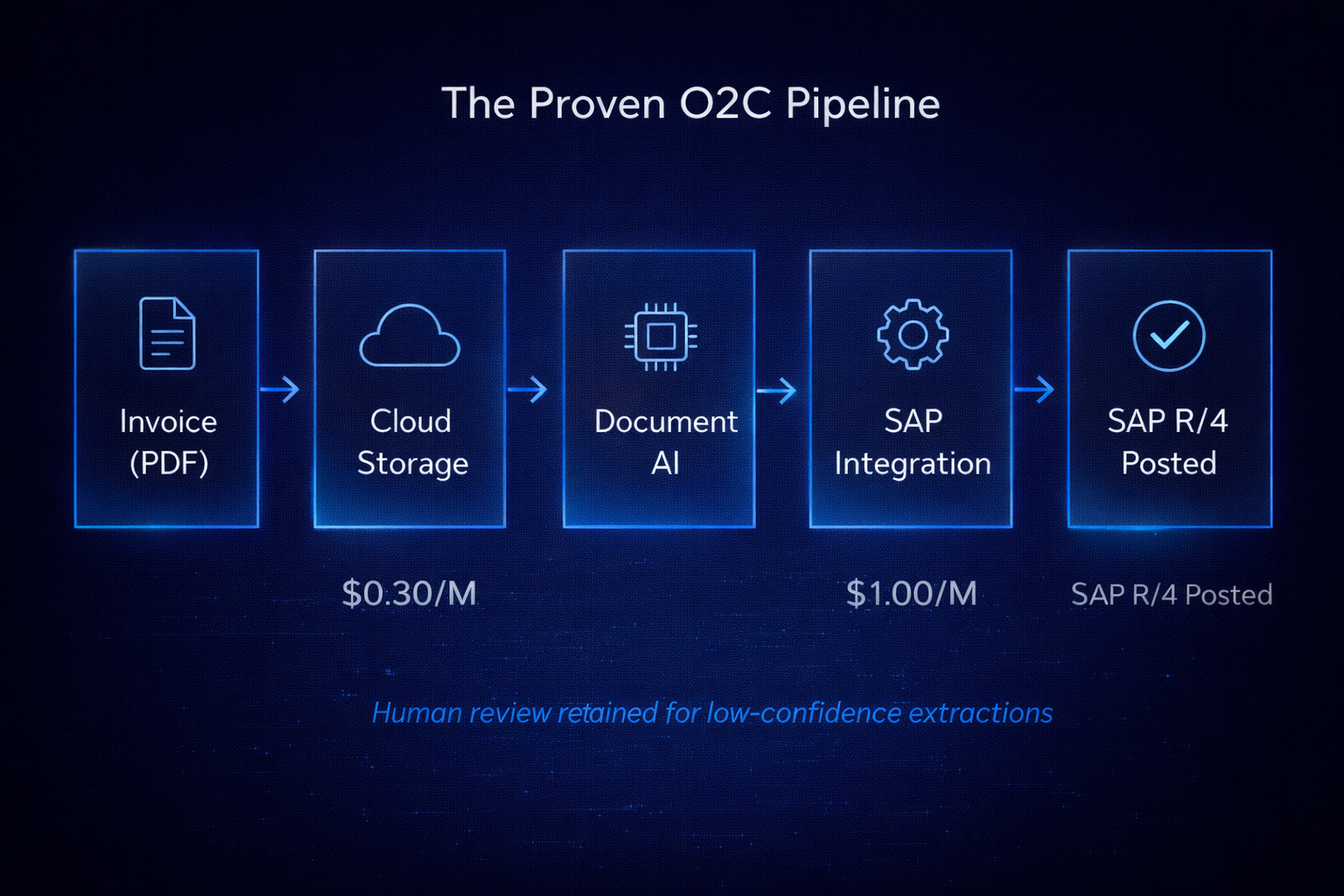

Document AI's Invoice Parser is the most documented and production-validated Google AI product for O2C. It extracts vendor and supplier details, line items, totals, payment terms, tax fields, and PO references from PDF and scanned invoices with per-field confidence scores.[21] Invoices can be uploaded to Cloud Storage, automatically trigger Document AI processing via Cloud Functions, and pass structured JSON output to SAP Cloud Integration for business rule validation and ERP posting — without manual handoff between steps.[21][22]
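The "human review for low-confidence extractions" step is simple to express once the parser's output is flattened into field, value, and confidence triples. The sketch below assumes that flattening has already happened (in the `google-cloud-documentai` Python client, each entity in `document.entities` carries `type_`, `mention_text`, and `confidence`). The 0.85 threshold and the required-field names are hypothetical and should be tuned against your own pilot data.

```python
from dataclasses import dataclass

# Hypothetical policy values: tune against your own pilot results.
CONFIDENCE_THRESHOLD = 0.85
REQUIRED_FIELDS = {"invoice_id", "supplier_name", "total_amount", "due_date"}


@dataclass
class ExtractedField:
    name: str          # entity type, e.g. "total_amount" (assumed naming)
    value: str         # the extracted text
    confidence: float  # 0.0 to 1.0, as reported by the parser


def route_invoice(fields: list[ExtractedField]) -> tuple[str, list[str]]:
    """Return ("auto_post" or "human_review", reasons) for one parsed invoice."""
    best: dict[str, ExtractedField] = {}
    for f in fields:
        # Keep the highest-confidence extraction when a field appears more than once.
        if f.name not in best or f.confidence > best[f.name].confidence:
            best[f.name] = f
    reasons = []
    for name in sorted(REQUIRED_FIELDS):
        if name not in best:
            reasons.append(f"missing {name}")
        elif best[name].confidence < CONFIDENCE_THRESHOLD:
            reasons.append(f"low confidence on {name} ({best[name].confidence:.2f})")
    return ("human_review" if reasons else "auto_post"), reasons


parsed = [
    ExtractedField("invoice_id", "INV-10442", 0.98),
    ExtractedField("supplier_name", "Acme Corp", 0.95),
    ExtractedField("total_amount", "12,480.00", 0.71),
    ExtractedField("due_date", "2026-04-30", 0.93),
]
print(route_invoice(parsed))
```

Invoices routed to `auto_post` would continue to SAP Cloud Integration for business rule validation; the rest go to a reviewer along with the listed reasons.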

The FibroGen case study (covered in the case studies section below) provides the only Google-published production example of this full architecture with quantified results: a two-person AP team at a biopharmaceutical company processing ~1,000 invoices per month, previously spending approximately 500 hours per person per year on manual PDF invoice entry into SAP. After Document AI deployment with Deloitte as implementation partner: 40x ROI (Google-stated), significant reduction in manual data entry time, and AP headcount redeployed to exception management and vendor relationship work.[22]
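The reported baseline implies roughly five minutes of manual handling per invoice, a useful yardstick when sizing your own pilot. A quick check using only the figures above (two people, about 500 hours each per year, about 1,000 invoices per month):

```python
people = 2
hours_per_person_per_year = 500
invoices_per_year = 1_000 * 12

total_hours = people * hours_per_person_per_year
minutes_per_invoice = total_hours * 60 / invoices_per_year
print(f"{total_hours} hours/year, about {minutes_per_invoice:.1f} minutes per invoice")
```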

Vertex AI Agent Builder is Google's platform for constructing, orchestrating, and deploying AI agents connected to enterprise data. For O2C, the capabilities include 100+ pre-built connectors to enterprise systems (ERP, procurement, HR platforms) managed via Apigee and Application Integration; Retrieval-Augmented Generation via Vertex AI Search; Model Context Protocol (MCP) support; and orchestrated multi-step workflows that reuse existing Application Integration logic.[23]

For O2C workflows specifically, Agent Builder can support: invoice intake agents (file upload → Document AI extraction → ERP validation → SAP posting), collections triage agents (pulling aging data from BigQuery, applying credit rule logic, generating personalized outreach), and dispute intake agents (classifying dispute type from customer email, routing to the appropriate team, logging to CRM).
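In the collections triage case, the "credit rule logic" step is usually deterministic code that sits beside the model rather than inside it. A minimal sketch, assuming aging rows have already been pulled from BigQuery into Python objects; the field names, thresholds, and action labels are hypothetical placeholders for your own credit policy.

```python
from dataclasses import dataclass


@dataclass
class OpenBalance:
    customer: str
    amount: float        # open balance in USD
    days_past_due: int
    disputed: bool


def triage(row: OpenBalance) -> str:
    """Assign a collections action using hypothetical credit-policy thresholds."""
    if row.disputed:
        return "route_to_dispute_team"  # avoid sending reminders on a disputed item
    if row.days_past_due > 90 or row.amount >= 100_000:
        return "escalate_to_collector"
    if row.days_past_due > 30:
        return "personalized_reminder"  # the step where a model could draft the message
    return "no_action"


queue = [
    OpenBalance("Acme Corp", 150_000, 12, False),
    OpenBalance("Globex", 8_200, 45, False),
    OpenBalance("Initech", 3_100, 70, True),
    OpenBalance("Umbrella", 950, 5, False),
]
# Work the oldest, largest balances first.
for row in sorted(queue, key=lambda r: (r.days_past_due, r.amount), reverse=True):
    print(row.customer, triage(row))
```

Keeping the policy in code makes it auditable and limits the model to the parts that need language, such as drafting the reminder itself.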

These are architectural possibilities requiring custom build, not out-of-the-box solutions available on Day 1. The distinction is real: Google provides the platform, connectors, and orchestration layer, but an implementation team — internal engineers or a partner like Deloitte or Accenture — still needs to build the specific workflow logic, data validation rules, and exception handling for your environment.

Gemini Enterprise (previously Google Agentspace, rebranded October 2025) is Google's unified platform for enterprise knowledge workers to discover, build, and run AI agents. It launched in early access in December 2024 and was described as Google's "fastest-growing enterprise product ever" at Cloud Next April 2025 with hundreds of thousands of user licenses sold before general availability.[24][25] Relevant for O2C: no-code Agent Designer for building custom agents without developer involvement; pre-built agents including Deep Research, NotebookLM Enterprise, and Coding Agent; unified search across Google Workspace, Microsoft 365, Salesforce, Jira, Confluence, and SharePoint.[26] Pricing starts at $21/user/month for Business edition, $30/user/month for Standard/Plus.[27]

ERP integration status matters for practical deployment planning:

| Integration | Status (March 2026) |
|---|---|
| SAP S/4HANA via Cortex Framework / BigQuery | GA; zero-copy data fabric with SAP BDC announced October 2025 [28][29] |
| Vertex AI in SAP Generative AI Hub | GA; announced SAP Sapphire 2025 [30] |
| Oracle Sales Cloud connector | GA since May 2025 [31] |
| Salesforce connector (Application Integration) | GA; OAuth 2.0 security update September 2025 [31] |
| Microsoft Dynamics | ⚠️ Could not confirm GA — not found in Google's connector release notes as of March 2026 |

The SAP story is the strongest. The October 2025 zero-copy data fabric announcement between Google and SAP — live SAP data accessible via BigQuery without duplication — is a genuine architectural advancement for SAP-centric finance teams.[28][29] If your O2C runs on SAP S/4HANA, Google has the deepest and most recently updated ERP integration of the three major AI providers. For Microsoft Dynamics environments, this is a gap worth probing directly with Google's enterprise team before committing to Vertex AI as your O2C platform.

Enterprise Knowledge Graph is worth a brief mention for teams dealing with customer master data quality issues. The service performs entity reconciliation across BigQuery tables, resolving duplicate or variant representations of the same customer entity at scale.[32] For example, "Acme Corp," "ACME Corporation," and "Acme Corp. Ltd." can be resolved to a single entity. At high volume, this applies directly to linking payment remittances to the correct customer accounts when names and formats vary across systems. It handles graphs of up to billions of nodes using large-scale clustering algorithms.
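Enterprise Knowledge Graph uses Google's own reconciliation and clustering methods; the toy sketch below is not that algorithm. It only illustrates the basic idea on the three names above: normalize case and punctuation, strip common legal-form words, and group names that collapse to the same key. The suffix list is a hypothetical, deliberately short example.

```python
import re
from collections import defaultdict

# Hypothetical, deliberately short list of legal-form words to ignore when matching.
LEGAL_SUFFIXES = {"corp", "corporation", "inc", "incorporated", "ltd", "limited", "llc", "co"}


def match_key(name: str) -> str:
    """Lowercase, replace punctuation with spaces, and drop legal-form words."""
    tokens = re.sub(r"[^\w\s]", " ", name.lower()).split()
    return " ".join(t for t in tokens if t not in LEGAL_SUFFIXES)


def group_names(names: list[str]) -> dict[str, list[str]]:
    """Group customer name variants that share the same match key."""
    groups: dict[str, list[str]] = defaultdict(list)
    for name in names:
        groups[match_key(name)].append(name)
    return dict(groups)


print(group_names(["Acme Corp", "ACME Corporation", "Acme Corp. Ltd.", "Apex Inc."]))
```

Rules like these break down quickly at scale (abbreviations, typos, unrelated companies sharing a name), which is the gap a managed reconciliation service is meant to fill.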

Enterprise Security: Where Google Has a Measurable Edge

Finance teams in regulated industries — payment processors, banks, federal contractors, any organization subject to PCI DSS — should spend time on this section because the compliance certification comparison is one area where Google's lead over the other major AI providers is documented and significant.

Google Cloud's certification portfolio as of March 2026:

- SOC 1, SOC 2, SOC 3 — attested for Vertex AI Search Standard and Enterprise, RAG APIs[33]

- ISO 27001, 27017, 27018, 27701 (privacy management) — certified[34][33]

- FedRAMP High P-ATO — maintained for Google Cloud and Workspace[34]

- PCI DSS — certified[35][34]

- HIPAA BAA — available for Vertex AI Search, RAG APIs, and Google Workspace[33][35]

- HITRUST CSF — certified[36]

Compared to the other major providers:

| Certification | Google Vertex AI | OpenAI Enterprise | Anthropic Claude |
|---|---|---|---|
| SOC 2 Type II | ✅ | ✅ | ✅ |
| ISO 27001 | ✅ | ✅ | ⚠️ Pending as of Mar 2026 [37] |
| HIPAA BAA | ✅ | ✅ | ✅ |
| FedRAMP High P-ATO | ✅ | ⚠️ In progress [37] | ❌ Not available |
| PCI DSS | ✅ | ❌ Not confirmed | ❌ Not confirmed |
| ISO 27701 (Privacy) | ✅ | ❌ Not confirmed | ❌ Not confirmed |
| HITRUST CSF | ✅ | ❌ | ❌ |
| Customer data not used for training | ✅ (paid tiers) | ✅ (Enterprise) | ✅ |

A third-party enterprise AI platform comparison published in March 2026 concluded that "Google demonstrates enterprise-grade maturity with advanced automation, zero-trust architecture, and extensive certifications."[37] For finance teams where FedRAMP High and PCI DSS are hard requirements rather than nice-to-haves, Google is the only one of the three major AI providers compared here that currently satisfies both.

One important caveat: The compliance table for Gemini Enterprise specifically notes that Standard and Plus editions are "maintained through a structured internal process" and "will be included in future certification audits."[35] As of March 2026, the formal SOC 2 and ISO audit cycle has not yet been completed for Gemini Enterprise Standard/Plus specifically. Finance teams should request Google's current audit attestation reports (available via the Google Cloud compliance portal) before committing regulated workloads to those specific tiers.

The data training policy distinction is operationally critical. Google's tiered approach means finance teams need to confirm which product they are actually deploying:

Consumer Gemini apps (gemini.google.com) and Gemini Enterprise Starter use customer data for model training unless opted out. Gemini Enterprise Business/Standard/Plus, Workspace Business Standard and above, and all paid Vertex AI editions do not use your data to train Google models.[19][38][35] Google's Gemini Enterprise FAQ states directly: "Your data — including prompts, outputs, and training — aren't used to train Google models or models for any other customer."

If your organization is deploying any Google AI product, verify you are on a Business Standard or higher Workspace plan, or a paid Vertex AI agreement — not the consumer or Starter products. The distinction matters for every piece of confidential AR data, customer financial information, or remittance detail that flows through the system.
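One way to enforce this in practice is a guard in the pipeline that refuses to send AR data to any endpoint not on an approved list. A minimal sketch, assuming you tag each configured endpoint with a product tier yourself; the tier labels below are hypothetical strings that mirror this post's categories, not identifiers from any Google API.

```python
# Hypothetical tier labels for products that, per the policy summary above,
# do not use customer data to train Google models.
APPROVED_TIERS = {
    "workspace_business_standard_or_higher",
    "gemini_enterprise_business",
    "gemini_enterprise_standard",
    "gemini_enterprise_plus",
    "vertex_ai_paid",
}


class UnapprovedTierError(RuntimeError):
    """Raised when confidential AR data would reach an unapproved product tier."""


def check_endpoint(endpoint_name: str, tier: str) -> None:
    """Raise before any confidential AR data is sent to an unapproved product tier."""
    if tier not in APPROVED_TIERS:
        raise UnapprovedTierError(f"{endpoint_name} is on tier {tier!r}; AR data blocked")


check_endpoint("remittance-extractor", "vertex_ai_paid")  # passes silently
try:
    check_endpoint("ad-hoc-summarizer", "gemini_enterprise_starter")
except UnapprovedTierError as err:
    print(err)
```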

Data residency: Vertex AI customers can select regional data storage and inference processing across North America (US, Canada), Europe (Netherlands, France, UK, Germany, Belgium), and Asia (Japan, Singapore, South Korea). Google extended ML processing residency guarantees, not just storage, to Canada in September 2024.[39] EU and UK data residency supports compliance with the GDPR's rules on international data transfers (Chapter V, Articles 44–50). Customer-Managed Encryption Keys (CMEK) are supported across Vertex AI for key sovereignty.[38]
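On Vertex AI the region is chosen when the client or endpoint is configured, so residency is easiest to enforce as an explicit mapping from data jurisdiction to an allowed region. A minimal sketch; the jurisdiction keys and the choice of one region per jurisdiction are assumptions, and the region IDs should be checked against Google's current list of Vertex AI locations and the specific model's regional availability before use.

```python
# Assumed one-region-per-jurisdiction policy, using Google Cloud region IDs.
RESIDENCY_REGIONS = {
    "US": "us-central1",              # Iowa
    "CA": "northamerica-northeast1",  # Montréal
    "NL": "europe-west4",             # Netherlands
    "FR": "europe-west9",             # Paris
    "UK": "europe-west2",             # London
    "DE": "europe-west3",             # Frankfurt
    "BE": "europe-west1",             # Belgium
    "JP": "asia-northeast1",          # Tokyo
    "SG": "asia-southeast1",          # Singapore
    "KR": "asia-northeast3",          # Seoul
}


def region_for(jurisdiction: str) -> str:
    """Return the approved region for a jurisdiction code, or fail loudly."""
    try:
        return RESIDENCY_REGIONS[jurisdiction.upper()]
    except KeyError:
        raise ValueError(f"No approved Vertex AI region for {jurisdiction!r}") from None


print(region_for("de"))
```

The returned value would then be passed as the `location` when constructing the client, for example `genai.Client(vertexai=True, project=..., location=region_for("de"))` in the `google-genai` SDK, so a request for an unmapped jurisdiction fails before any data leaves your environment.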

The Market Reality: 120,000 Enterprises, $240 Billion Backlog

A few market figures worth anchoring on when evaluating Google as a long-term platform bet for O2C infrastructure:

Alphabet's Q4 2025 earnings (reported February 4, 2026) showed Google Cloud revenue of $17.7 billion, up 48% year over year — the fastest growth rate among the major hyperscalers that quarter, edging out Microsoft Azure's approximately 39% growth.[1] The company exited 2025 with an annual Cloud revenue run rate above $70 billion. The Cloud backlog more than doubled year over year to $240 billion, driven by enterprise AI demand.[1]

On the adoption side: approximately 75% of Google Cloud customers are now using Google's AI products; 120,000+ enterprises are using Gemini; 8 million+ paid seats of Gemini Enterprise were sold to 2,800+ companies within four months of commercial launch; and 95% of the top 20 global SaaS companies use Gemini.[40][41] Gemini usage among Google Cloud customers grew 35x year-over-year.[41]

These numbers are all from Google's own investor communications and press releases — not independently audited. They should be treated as directional signals rather than independently verified facts. But the direction is clear: Google Cloud is growing faster than its peers, and the enterprise AI backlog suggests that growth is durable.

For O2C leaders making multi-year platform commitments, platform viability matters as much as the current feature set. A $240 billion backlog and 48% revenue growth signal a platform that will keep investing aggressively in enterprise AI capabilities for the foreseeable future.

What the Case Studies Actually Show

Three verified, named enterprise case studies were found using Google AI for finance or finance-adjacent workflows. All three are vendor-produced — Google Cloud Customer Stories or Google blog posts — with no independent third-party verification. This is noted explicitly because a recurring theme in this post series is being clear-eyed about what's proven in production versus what's architecturally plausible.

FibroGen — AP Invoice Automation with Document AI

FibroGen, Inc. (NASDAQ: FGEN), a clinical-stage biopharmaceutical company, deployed Google Cloud Document AI (Invoice Parser) plus Cloud Functions plus SAP Cloud Integration to automate approximately 1,000 invoices per month previously handled manually by a two-person AP team. The architecture: invoices arrive via email → automatically uploaded to Cloud Storage → Cloud Function invokes Document AI Invoice Parser → structured JSON forwarded to SAP Cloud Integration → SAP applies business rule validation → invoice posted in SAP, with human review retained for low-confidence extractions. Implementation partner was Deloitte. Google-stated result: 40x ROI, significant reduction in manual data entry time, AP headcount redeployed to exception management.[22]

This is the only Google-published production example of the Document AI → SAP integration with quantified results. The 40x ROI is a Google characterization with no independent audit. The architecture is technically consistent with Document AI's documented capabilities and provides a credible blueprint for O2C teams evaluating this pathway.

Berenberg Bank — Equity Research Automation with Vertex AI and Gemini Enterprise

Berenberg Bank (Germany's oldest private bank, founded 1590, €39 billion AUM, 1,600 employees) deployed a custom BegoChat AI assistant built on Vertex AI for equity research aggregation, then adopted NotebookLM for document summarization, and began a bankwide Gemini Enterprise rollout in Q1 2026. Portfolio managers had been spending hours daily reading and synthesizing broker reports, company filings, and news. The system generates daily market briefings ("Morning Mail") with AI assistance. Google-stated results: 85–90% faster content generation through AI-assisted workflows; significantly broader market coverage with the same research teams.[42] Berenberg's own LinkedIn post on March 17, 2026, corroborates the Gemini Enterprise rollout scope from outside Google's materials, though it is still a company statement rather than independent verification.[43]

Worth noting: this is a research and content generation use case in investment banking, not direct O2C. It is included because it is the most detailed named financial institution case study with specific quantified results from a primary source, and because the progression from custom Vertex AI build (bespoke, expensive, high-accuracy) to Gemini Enterprise deployment (broader, lower-cost, sufficient accuracy) mirrors the trajectory O2C teams are likely to follow.

Head of AI at Berenberg, Nico Baum, offered a framing worth repeating: "At the end of the day, there has to be a measurable economic impact for the business. You don't get projects through management if the only outcome is that three employees can go home an hour earlier."[42]

Deutsche Bank — AI-Assisted Document Processing

Deutsche Bank trained 6,000+ employees on Vertex AI and Gemini, and deployed AI-assisted document processing workflows across business units with a reported 97% accuracy rate and 40–50% of developer time reclaimed through AI-assisted workflows.[44] This is a very brief mention from a Google analyst communication with no detailed case study or methodology disclosed. The accuracy and productivity figures are single-source vendor claims; treat them as indicative at best, and leave them out of any analysis that requires rigorous sourcing.

Honest Limitations: Where Google Falls Short

Benchmark performance on finance-specific tasks trails the leaders. On the Vals AI Finance Agent Benchmark v1.1 (March 2026), Claude Opus 4.6 Thinking ranks first at 60.65%; GPT-5.1 ranks second at 56.55%; Claude Sonnet 4.5 Thinking is third at 55.32%. Gemini 2.5 Pro's Vals Index overall ranking was 30th out of 34 models at 48.82% on that same March 2026 leaderboard.[45]

Separately, a February 2026 Vals AI LinkedIn post — referencing an earlier benchmark run, not the March 2026 v1.1 snapshot — cited Gemini 3.1 Pro Preview as ranking third on the Finance Agent benchmark at that time, placing it "ahead of OpenAI but still behind Anthropic." These are two distinct benchmark snapshots and should not be read as the same leaderboard.[46] Gemini 3.1 Pro in preview may show stronger finance performance than Gemini 2.5 Pro — but it is not GA. The production model you would deploy today scores near the bottom of the finance benchmark leaderboard.

On the FinanceReasoning benchmark (238 hard financial reasoning questions, AIMultiple, February 2026), Gemini 3.1 Pro Preview scored 86.55% accuracy, slightly below GPT-5 mini's 87.39% but using fewer tokens, which suggests a cost-efficiency advantage at comparable preview capability levels.[47]

Independent enterprise testing observations: Gemini is "quickest but shows more variance in output quality" compared to Claude and GPT-4o; on coding tasks, Gemini significantly lags Claude (71.9% vs. 93.7% on some benchmarks); "inconsistent response times and occasional service interruptions at high volume" were reported in enterprise contexts.[48] That last point is a legitimate operational concern for transaction-scale O2C automation where latency directly affects cash application SLAs or customer-facing dispute portal response times.

Several headline features are not yet GA. Gemini 3.1 Pro is preview only. The new March 2026 Workspace AI features in Docs, Sheets, and Drive are in beta, English-only, and initially limited to AI Ultra/Pro subscribers. Workspace Studio's promotional higher usage limits expire March 31, 2026 — an AI Expanded Access add-on will be required starting April 1.[13][49] Gemini Enterprise on Google Distributed Cloud (for on-premises or air-gapped deployments) was announced as "coming soon" at Cloud Next April 2025 with no confirmed GA date as of March 2026.[50]

No out-of-the-box O2C workflow automation exists. Google's platform provides powerful building blocks — Document AI, Agent Builder, Gemini Enterprise Agent Designer, ERP connectors — but all production O2C implementations as of March 2026 require custom build. There is no equivalent of an O2C-specific SaaS product embedded in the Google stack. Teams evaluating Google for O2C should budget for implementation time and either internal engineering resources or a systems integrator partner.

The finance benchmark accuracy ceiling applies here too. The best-performing model on complex financial analyst tasks achieves approximately 60% accuracy. This ceiling applies to all providers, not just Google. Finance teams should design workflows that keep humans in review loops for any high-stakes O2C decisions — credit limit changes, large write-offs, dispute resolutions above a materiality threshold. Current AI — at any provider — is a productivity multiplier, not an autonomous decision-maker for consequential finance actions.[45]
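One simple way to encode "humans stay in the loop for consequential actions" is a gate that every AI-proposed action passes through before execution. A minimal sketch; the action names and the $25,000 materiality threshold are hypothetical placeholders for your own policy.

```python
from dataclasses import dataclass

# Hypothetical policy: credit limit changes always need a person;
# write-offs and dispute resolutions need one above the materiality threshold.
ALWAYS_REVIEW = {"credit_limit_change"}
MATERIALITY_THRESHOLD = 25_000.0  # USD


@dataclass
class ProposedAction:
    kind: str       # e.g. "dispute_resolution", "write_off", "credit_limit_change"
    amount: float   # USD affected by the action
    rationale: str  # model-generated explanation, kept for the reviewer


def needs_human_review(action: ProposedAction) -> bool:
    """True if a person must approve the action before it is executed."""
    return action.kind in ALWAYS_REVIEW or action.amount >= MATERIALITY_THRESHOLD


print(needs_human_review(ProposedAction("dispute_resolution", 1_800.0, "duplicate billing")))
print(needs_human_review(ProposedAction("dispute_resolution", 40_000.0, "pricing error")))
print(needs_human_review(ProposedAction("credit_limit_change", 5_000.0, "improved payment history")))
```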

Where to Start in O2C

Given the full picture, here's a practical sequencing for O2C teams evaluating Google AI:

If your organization is on Google Workspace Business Standard or above — start with NotebookLM Plus this week. You already have it. Upload your AR runbooks, collections policy, and one or two master service agreements. Run queries. See what it returns and how the citations work. This is a zero-cost, zero-IT-involvement entry point that delivers immediate practical value for collections and dispute handling teams. The feedback from this experiment will inform whether the broader Google AI stack deserves further investment.

If you are on SAP S/4HANA and evaluating AP or AR invoice automation — start with a Document AI proof of concept. The FibroGen architecture is a documented, GA pathway with an established implementation pattern. Run a 30-day pilot on your lowest-complexity invoice type — single-page standard format, same vendor, consistent layout. Measure extraction accuracy against your human baseline. If it exceeds 90% on structured invoices, you have a business case for broader deployment. The SAP zero-copy data fabric integration (October 2025) makes the ERP side of this significantly simpler than it was 12 months ago.
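Measuring "extraction accuracy against your human baseline" is easier to agree on if the metric is defined up front. A minimal sketch of field-level accuracy, assuming each pilot invoice has a human-keyed record and a Document AI record with the same field names; the case and whitespace normalization is an assumption, and amounts or dates may need type-aware comparison in a real pilot. The sample records are hypothetical.

```python
from typing import Optional


def normalize(value: Optional[str]) -> str:
    """Case- and whitespace-insensitive comparison form of a field value."""
    return " ".join((value or "").lower().split())


def field_accuracy(human: list[dict], extracted: list[dict], fields: list[str]) -> float:
    """Share of (invoice, field) pairs where the extraction matches the human record."""
    if len(human) != len(extracted):
        raise ValueError("human and extracted lists must be aligned invoice by invoice")
    total = correct = 0
    for h, e in zip(human, extracted):
        for f in fields:
            total += 1
            correct += normalize(h.get(f)) == normalize(e.get(f))
    return correct / total


FIELDS = ["invoice_id", "total_amount", "due_date"]
human = [
    {"invoice_id": "INV-1", "total_amount": "100.00", "due_date": "2026-04-30"},
    {"invoice_id": "INV-2", "total_amount": "250.00", "due_date": "2026-05-15"},
]
extracted = [
    {"invoice_id": "inv-1", "total_amount": "100.00", "due_date": "2026-04-30"},
    {"invoice_id": "INV-2", "total_amount": "205.00", "due_date": "2026-05-15"},
]
acc = field_accuracy(human, extracted, FIELDS)
print(f"field-level accuracy: {acc:.1%}, meets 90% bar: {acc > 0.90}")
```

Reporting accuracy per field as well as overall tends to be more useful in practice, since a parser can be near-perfect on invoice IDs while still misreading totals.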

For Workspace AI in finance operations, the practical entry point is Gemini in Gmail for collections email drafting and Gemini in Sheets for formula generation and data structuring. These are low-risk, already-available capabilities that deliver immediate productivity gains without requiring any technical implementation. Treat them as a warm-up for broader AI workflow thinking, not a destination.

For custom O2C agent development on Vertex AI, involve your enterprise IT or a Google Cloud implementation partner before committing to a build schedule. The capabilities are real, the SAP connectors are GA, and Agent Builder provides real orchestration infrastructure. But "architectural possibility requiring custom build" means months of implementation work, not weeks. Set expectations accordingly.

Do not plan production workflows around Gemini 3.1 Pro until GA is confirmed. The preview benchmarks are attractive — particularly for finance-specific reasoning tasks. But preview means the model can change, and building production dependencies on preview API endpoints introduces operational risk. Wait for Google's GA announcement, then re-evaluate.

The Honest Summary

Google's position in enterprise O2C AI in 2026 is: strongest on infrastructure and security, best-in-class on context window economics, most mature on SAP ERP integration, and genuinely accessible for non-technical practitioners through NotebookLM. It is not the leader on finance-specific benchmark performance with production-ready models, does not offer any pre-built O2C workflow automation, and has some real output quality variance concerns at the transaction scale that high-frequency AR automation requires.

The honest comparison across the big three:

Use Google if: your organization is on SAP and Google Workspace, you need FedRAMP High or PCI DSS compliance, you are building large-context document processing pipelines where the 1M token window and Vertex AI batch pricing change the unit economics, or you want the most comprehensive compliance certification portfolio of any major AI provider.

Use Anthropic if: complex multi-document reasoning and consistency of output matter more than price — contract review, dispute analysis, credit underwriting on complex counterparties, and tasks where Claude's leading finance benchmark performance translates to fewer human review cycles.

Use OpenAI if: you need the broadest ecosystem of integrations and third-party tool support, are already embedded in the Microsoft/Azure stack, or want the most widely deployed enterprise AI platform with the largest library of existing enterprise workflow integrations.

Most large organizations will end up using all three in some form — different vendors for different layers of the O2C workflow. The practical question is which provider serves as your primary infrastructure layer, which you use for specialized high-judgment tasks, and which you access through the embedded AI in your existing ERP or AR automation platform.

Google's combination of infrastructure depth, compliance maturity, SAP partnership, and the 1M-token-at-reasonable-cost position makes it a strong candidate for the primary infrastructure layer, particularly for organizations where cost at volume and regulatory compliance are the dominant selection criteria. For the highest-stakes finance reasoning tasks, the benchmark evidence says the edge still belongs to Anthropic — and that gap is worth understanding clearly when designing where human review remains in the loop.