Everyone is talking about GPT-5. The benchmark headlines, the enterprise announcements, the breathless comparisons to every model that came before it. And GPT-5 is genuinely impressive: it launched on August 7, 2025, and crossed capability thresholds that matter for complex document reasoning and financial analysis.[1][2]

But here's what nobody is saying clearly enough for the people actually running Order-to-Cash operations: GPT-5 is probably not the model that will change your daily work. The models that will change your daily work are significantly less expensive, already embedded in the platforms you use, and already good enough to handle the volume tasks that currently eat most of your team's time.

This post is about that distinction — and about what the OpenAI enterprise stack as a whole actually looks like for O2C teams in 2026.

The Mid-Tier Advantage: Why $0.15 Per Million Tokens Changes the Math

The most important thing to understand about AI in O2C right now is the cost-performance curve at the middle of the model tier.

Platforms like HighRadius, Emagia, Gaviti, and Esker are not embedding GPT-5 in their remittance extraction pipelines. They are embedding GPT-4o mini, Gemini Flash, and Haiku-class models — models that cost between $0.15 and $1.00 per million input tokens — because that is where the economics of production-scale O2C automation actually make sense.[3][4]

Think about what high-volume O2C tasks look like in token terms. A typical remittance advice email plus the matching invoice data might run about 2,000 tokens. At 500 remittances a day, that's 1 million input tokens per day for a single workflow. At $0.15/M tokens (GPT-4o mini), that workflow costs $0.15 per day. At $1.25/M tokens (GPT-5), the same 500 remittances cost $1.25 per day, more than eight times as much, for a task that does not require frontier-level reasoning to execute well.
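The arithmetic above is easy to rerun with your own volumes. A minimal sketch, assuming roughly 2,000 input tokens per remittance and counting input tokens only (output tokens, retries, and prompt overhead are ignored); the prices are the per-million input rates quoted in this post, and the function name is illustrative.

```python
# Input-token prices per million, as quoted in this post.
PRICE_PER_M_INPUT = {
    "gpt-4o-mini": 0.15,
    "gpt-5": 1.25,
}


def daily_input_cost(docs_per_day: int, tokens_per_doc: int, price_per_m: float) -> float:
    """USD per day for input tokens only (output tokens and retries are ignored)."""
    tokens_per_day = docs_per_day * tokens_per_doc
    return tokens_per_day / 1_000_000 * price_per_m


if __name__ == "__main__":
    for model, price in PRICE_PER_M_INPUT.items():
        cost = daily_input_cost(docs_per_day=500, tokens_per_doc=2_000, price_per_m=price)
        print(f"{model}: ${cost:.2f}/day, about ${cost * 30:.2f}/month")
```

Swapping in your real daily volume and a measured average token count per document is usually enough for a first-pass budget conversation.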

The SSON Future of O2C Market Report (2025) found that AI adoption in O2C grew 14% year-over-year, but cost and integration constraints remain the primary barriers to broader deployment.[5] That is exactly the problem mid-tier models are solving in real time. When you can run a collections email drafting workflow at scale for a few dollars a month, the cost barrier disappears.

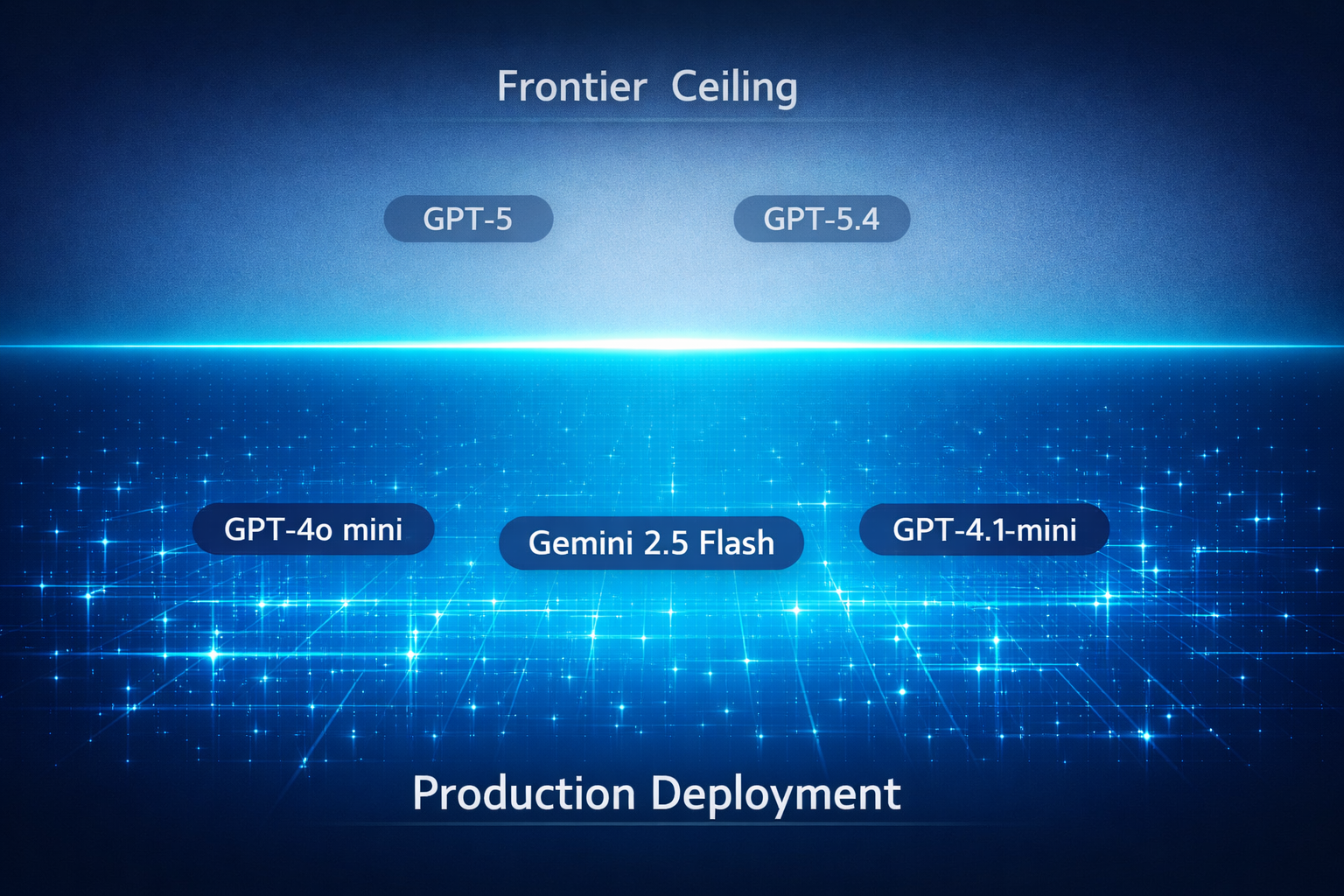

This is the model tier where production O2C AI is actually happening. GPT-5 and GPT-5.4 set the ceiling — they define what's possible and they're worth understanding as the direction the mid-tier will reach in 12–18 months. But the everyday deployment decision for O2C teams in 2026 is almost always: GPT-4o mini or Gemini 2.5 Flash for volume work, with GPT-4.1 or Claude Haiku 4.5 stepping up for judgment-heavy tasks like dispute triage or credit analysis.
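One way to encode that split is a small routing table that maps each workflow to a tier and each tier to a model. This is a hypothetical sketch: the task names are placeholders for your own workflows, the model strings are labels rather than verified API model IDs, and sending unknown tasks to the judgment tier is a conservative design choice, not a vendor recommendation.

```python
from enum import Enum


class Tier(Enum):
    VOLUME = "volume"      # structured, repeatable, high-frequency work
    JUDGMENT = "judgment"  # needs interpretation; output still goes to a person


# Hypothetical workflow names; substitute your own.
TASK_TIER = {
    "remittance_extraction": Tier.VOLUME,
    "invoice_classification": Tier.VOLUME,
    "collections_email_draft": Tier.VOLUME,
    "dispute_triage": Tier.JUDGMENT,
    "credit_analysis": Tier.JUDGMENT,
}

# First entry is the default choice, second is the fallback.
# Labels only: confirm exact model IDs in each vendor's API docs.
TIER_MODELS = {
    Tier.VOLUME: ["gpt-4o-mini", "gemini-2.5-flash"],
    Tier.JUDGMENT: ["gpt-4.1", "claude-haiku-4.5"],
}


def pick_model(task: str, fallback: bool = False) -> str:
    """Return the model for a task; unknown tasks go to the judgment tier."""
    tier = TASK_TIER.get(task, Tier.JUDGMENT)
    return TIER_MODELS[tier][1 if fallback else 0]
```

Keeping the mapping in data rather than in workflow code makes it cheap to swap in GPT-4.1-mini or a newer mid-tier model later.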

The Model Tier — A Practical Reference

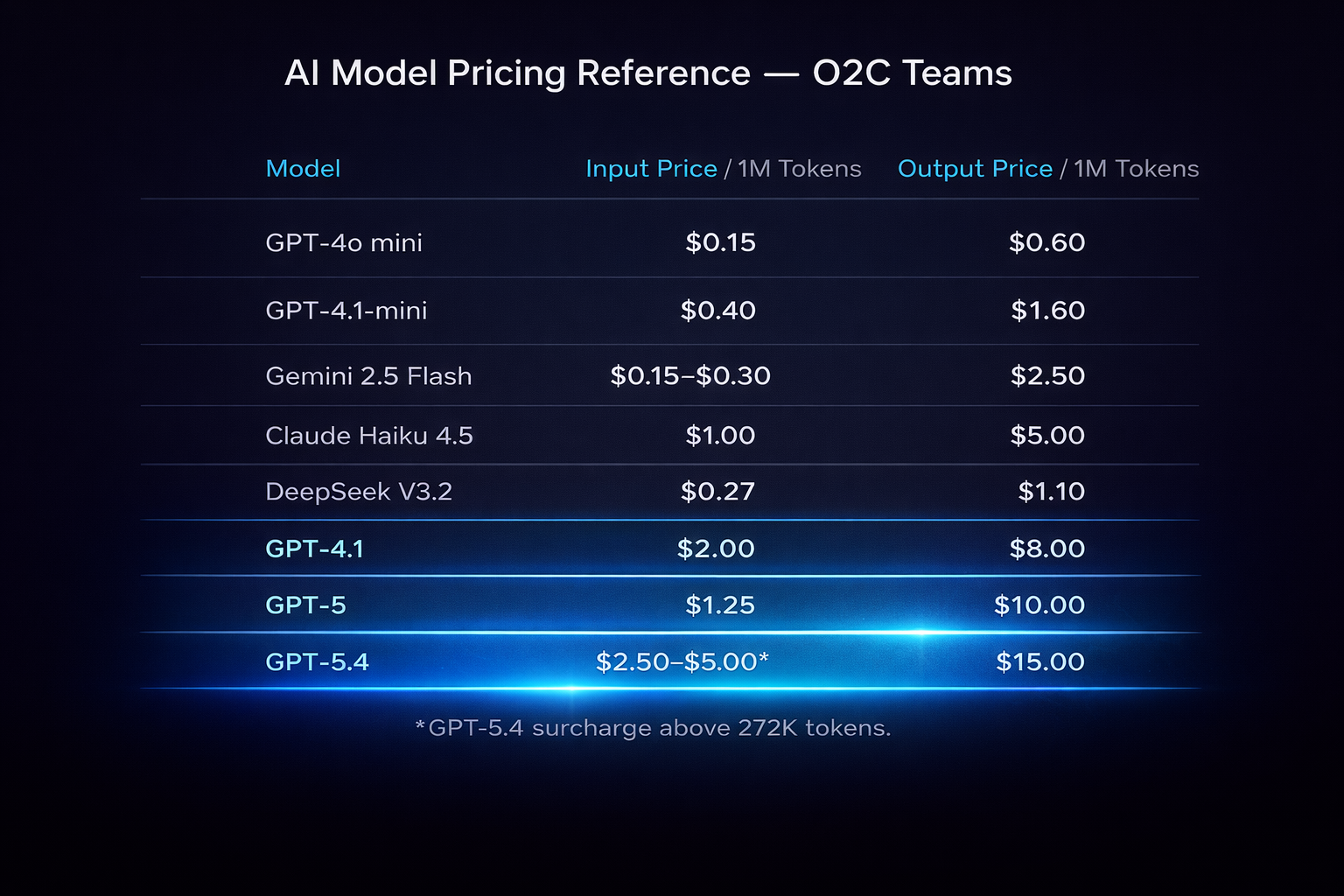

Before going into how these models fit specific O2C tasks, here's the pricing picture with the mid-tier front and center.

| Model | Input / 1M Tokens | Output / 1M Tokens | Best For in O2C |
|---|---|---|---|
| GPT-4o mini | $0.15 | $0.60 | High-volume remittance extraction, collections email drafting, invoice classification |
| GPT-4.1-mini | ~$0.40 | ~$1.60 | Structured document parsing, exception flagging; OpenAI's forward direction from GPT-4o mini |
| Gemini 2.5 Flash | $0.15 (≤200K tokens) / $0.30 (>200K) | $2.50 | Standard O2C batch tasks at the lower tier; large-context ingestion at the higher tier |
| Claude Haiku 4.5 | $1.00 | $5.00 | Near-frontier accuracy for dispute triage and coding tasks |
| DeepSeek V3.2 | ~$0.27 | ~$1.10 | Cost-optimized bulk classification; note the data residency risk for regulated AR environments |
| GPT-4.1 | $2.00 | $8.00 | Judgment-heavy tasks: credit analysis, complex dispute review; 1M-token context window |
| GPT-5 | $1.25 | $10.00 | Frontier capability; 400K context; complex multi-document analysis |
| GPT-5.4 | $2.50–$5.00* | $15.00 | Maximum context (1M tokens); full AR ledger + contracts in one prompt. *Higher input rate applies above 272K tokens |
| Batch API | 50% off any model | 50% off any model | Async overnight processing; apply to any bulk O2C run |

Model pricing reference — mid-tier front and center, frontier as the ceiling.

GPT-4.1 replaces GPT-4o; GPT-4.1-mini replaces GPT-4o mini. OpenAI has signaled GPT-4.1 as the successor to GPT-4o and GPT-4.1-mini as the forward direction for the mid-tier API. GPT-4.1 brings a full 1M token context window at $2.00/$8.00 — better context capacity than GPT-4o at a lower per-token price. If your team is building new workflows today, build to GPT-4.1 and GPT-4.1-mini rather than their predecessors.[6]

GPT-5.4 cost surcharge above 272K tokens. The large-context O2C use cases described in this post — full AR ledger history, multiple contract versions, complete dispute files in one prompt — will almost always exceed 272K tokens per call. At that threshold, GPT-5.4 input pricing jumps to the higher tier ($5.00/M). Factor this into cost modeling before committing to GPT-5.4 as the architecture for high-volume large-context workflows. GPT-4.1's 1M context at $2.00/M input may be more cost-effective for most O2C applications.[8]
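To see how the surcharge changes the comparison, the sketch below prices a single large-context call on GPT-5.4 and on GPT-4.1 using the list prices quoted above. It assumes, as a simplification, that once a prompt crosses 272K tokens the entire prompt is billed at the $5.00/M rate and output stays at $15.00/M; check OpenAI's pricing page for the exact billing rule before relying on it.

```python
GPT54_THRESHOLD = 272_000  # tokens; surcharge threshold cited above


def _cost(tokens: int, rate_per_m: float) -> float:
    return tokens / 1_000_000 * rate_per_m


def gpt54_call_cost(input_tokens: int, output_tokens: int) -> float:
    # ASSUMPTION: crossing the threshold moves the whole prompt to the $5.00/M rate.
    input_rate = 5.00 if input_tokens > GPT54_THRESHOLD else 2.50
    return _cost(input_tokens, input_rate) + _cost(output_tokens, 15.00)


def gpt41_call_cost(input_tokens: int, output_tokens: int) -> float:
    # Flat $2.00/M input and $8.00/M output across the 1M-token window.
    return _cost(input_tokens, 2.00) + _cost(output_tokens, 8.00)


if __name__ == "__main__":
    for size in (150_000, 600_000):
        print(f"{size:>7} input tokens: GPT-5.4 ${gpt54_call_cost(size, 4_000):.2f}, "
              f"GPT-4.1 ${gpt41_call_cost(size, 4_000):.2f}")
```

For a 600K-token dispute file with a 4K-token response, this sketch gives about $3.06 on GPT-5.4 versus about $1.23 on GPT-4.1, and the gap repeats on every call in a high-volume workflow.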

Gemini 2.5 Flash's tiered pricing matters. Most standard O2C batch tasks fall under 200K tokens per call, which puts you in the $0.15/M tier. If you're ingesting large contracts, full AR aging histories, or multi-document remittance packages in a single call, you'll cross into $0.30/M territory. Plan your architecture accordingly — splitting large documents across calls can keep you in the cheaper tier.[7]
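Splitting can be as simple as packing documents into calls that each stay under the 200K-token line. A minimal greedy sketch, assuming you already have a token count per document (from your provider's token counter or an estimate); the 2,000-token overhead reserved for instructions is an illustrative figure, and a single oversized document raises an error so it can be chunked first.

```python
from typing import List, Tuple

CHEAP_TIER_LIMIT = 200_000  # per-call token line for the lower Gemini 2.5 Flash rate, as described above


def pack_documents(
    docs: List[Tuple[str, int]],
    limit: int = CHEAP_TIER_LIMIT,
    overhead: int = 2_000,
) -> List[List[str]]:
    """Greedily group (doc_id, token_count) pairs into calls that stay under `limit`.

    `overhead` reserves room for instructions; documents are kept in input order.
    """
    budget = limit - overhead
    calls: List[List[str]] = []
    current: List[str] = []
    used = 0
    for doc_id, tokens in docs:
        if tokens > budget:
            raise ValueError(f"{doc_id} ({tokens} tokens) must be split before packing")
        if used + tokens > budget:
            calls.append(current)  # close the current call and start a new one
            current, used = [], 0
        current.append(doc_id)
        used += tokens
    if current:
        calls.append(current)
    return calls
```

The trade-off: documents that land in different calls cannot be cross-referenced by the model, so order the input so related items (an invoice and its dispute thread, for example) sit next to each other.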

The Batch API discount is underused. Any workflow that does not require a real-time response is a candidate for batch processing: remittance matching, classification runs, collections outreach drafting, DSO reporting. The 50% discount applies across all OpenAI models. For a team that moves its consistent overnight jobs to batch, the cost of those workloads could fall by half.[8]
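The savings scale with how much of your spend is actually batch-eligible. A minimal sketch, assuming a flat 50% discount on the batch-eligible share and list price on everything that must stay real-time; the dollar figures are hypothetical.

```python
def monthly_spend(realtime_usd: float, batchable_usd: float, batch_discount: float = 0.5) -> float:
    """Monthly model spend after moving batch-eligible work to the Batch API.

    Only the batch-eligible share is discounted; anything that needs a real-time
    answer (a live customer chat, for instance) stays at list price.
    """
    return realtime_usd + batchable_usd * (1 - batch_discount)


if __name__ == "__main__":
    before = 400 + 600
    after = monthly_spend(400, 600)
    print(f"${before} -> ${after:.0f} ({1 - after / before:.0%} saved)")
```

A team spending $400 a month on real-time work and $600 on batch-eligible jobs would land at $700, a 30% overall reduction; the full 50% only materializes when nearly everything can wait.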

What GPT-5 Is Actually Good For in O2C

GPT-5 is not irrelevant to O2C. The question is deployment context, not capability.

When you have a task that genuinely requires frontier-level reasoning — complex multi-party contract disputes, credit analysis on a counterparty with complicated corporate structure, or working through a 200-page master services agreement with payment terms embedded in three different appendices — GPT-5 is the right tool. Its 400,000-token context window handles documents that would have required chunking and re-assembly with earlier models.[1][2]

GPT-5.4, the March 2026 update, extends this further: 1 million tokens of context, which means an entire AR ledger history for a major customer, multiple contract versions, dispute correspondence, and a full credit file can all live in a single prompt.[9] For complex credit underwriting decisions, that kind of context completeness changes the quality of the analysis.

Box documented a real-world illustration of what this looks like at the frontier: a task that previously required days of manual document review was completed in minutes using GPT-5.2's large-context reasoning.[10] That's a genuine capability shift — but it's a frontier use case, not a baseline expectation. Most O2C teams will encounter this level of document complexity occasionally, not daily.

The honest framing for GPT-5 in O2C: it's the right call for your hardest, most document-dense, most judgment-intensive tasks. It is not the right economic choice for the 80% of O2C work that is structured, repeatable, and volume-dependent.

Production deployment sits in the mid-tier. Frontier is the ceiling — not the starting point.

The Enterprise Stack: What You're Actually Buying With ChatGPT Enterprise

If your organization is evaluating or has already procured ChatGPT Enterprise, here's what that actually means for O2C teams on the security and compliance side — because this is where the decision usually gets made in finance organizations.

ChatGPT Enterprise holds SOC 2 Type II (audited January–June 2025), ISO 27001, 27017, 27018, and 27701 certifications.[11][12] Encryption is AES-256 at rest and TLS 1.2+ in transit. Enterprise Key Management (EKM) lets customers control their own encryption keys — not just trusting OpenAI's key management, but maintaining organizational control of access at the cryptographic level.[11]

Data residency is available in multiple regions, and under Enterprise and API agreements OpenAI does not train models on customer data by default.[11][12] For O2C teams working with customer credit data, payment terms, banking information, and dispute records (all sensitive under standard data handling policies and, depending on your industry, under specific regulatory frameworks), this stack is built to meet those requirements.

OpenAI's PCI-DSS compliance covers its payment processing components. For organizations in healthcare O2C, HIPAA-eligible configuration options are available.[12]

What this means practically: A team using ChatGPT Enterprise to draft collections correspondence, analyze customer credit files, or summarize dispute history is working within an enterprise security posture that finance and legal teams can sign off on. This is genuinely different from using the consumer ChatGPT interface, where data handling terms and controls are more limited.

At the market level, 92% of Fortune 500 companies now use OpenAI products, and more than 1 million businesses globally have active OpenAI deployments.[13][14] OpenAI crossed $20B in annualized revenue in January 2026, according to its CFO, and Reuters reported the figure passing $25B annualized by March 2026.[15][16] The vendor risk conversation about OpenAI's long-term viability has largely been settled: this is an enterprise-grade supplier by any reasonable measure.

OpenAI Operator and Agents SDK — Multi-Step O2C Automation Without the Platform Dependency

This is the part of the OpenAI stack that most O2C teams are not paying enough attention to.

OpenAI Operator, launched January 2025, is a Computer-Using Agent: it can navigate web interfaces, fill forms, click through multi-step processes, and execute tasks across UIs autonomously.[17][18] It was updated in May 2025 with an improved underlying model.[19] For O2C teams managing workflows that span multiple systems — say, pulling aging data from your ERP portal, cross-referencing with a customer's payment portal, and logging the result in your collections system — Operator can execute that sequence without building a formal API integration.

The OpenAI Agents SDK is the open-source framework for building custom multi-agent pipelines.[20] The core primitives are straightforward: agents (specialized models with defined roles), handoffs (delegation from one agent to a sub-agent for a specific task), and guardrails (validation rules that run before or after agent actions). For O2C, this framework maps directly to how complex workflows actually work:

- A routing agent receives incoming customer communications and classifies them as remittance advice, dispute notification, or general inquiry

- Handoff to a remittance extraction agent, dispute intake agent, or collections response agent based on classification

- Guardrails validate that extracted payment amounts match invoice totals before writing to your AR system, and flag exceptions for human review
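The control flow of that pattern can be sketched without any framework. This is not the Agents SDK API: the SDK expresses these ideas as `Agent` objects with handoffs and input/output guardrails, whereas here plain Python functions stand in for each role so the logic is easy to test. `classify` and `extract_remittance` are hypothetical keyword and regex stand-ins for model calls, and the `INV-<digits>` invoice ID format is assumed.

```python
import re
from dataclasses import dataclass, field
from decimal import Decimal
from typing import Dict, List, Optional


@dataclass
class Remittance:
    invoice_id: str
    amount_paid: Decimal


@dataclass
class Outcome:
    route: str        # which specialist "agent" the message went to
    ok_to_post: bool  # True only if the guardrail passed
    exceptions: List[str] = field(default_factory=list)


def classify(message: str) -> str:
    """Routing step. A keyword rule stands in for the routing agent's model call."""
    text = message.lower()
    if "remittance" in text or "payment advice" in text:
        return "remittance"
    if "dispute" in text or "short pay" in text:
        return "dispute"
    return "inquiry"


def extract_remittance(message: str) -> Optional[Remittance]:
    """Extraction step. A regex stands in for the extraction agent."""
    m = re.search(r"(INV-\d+)\D+(\d+(?:\.\d{2})?)", message)
    if m is None:
        return None
    return Remittance(invoice_id=m.group(1), amount_paid=Decimal(m.group(2)))


def amount_guardrail(rem: Remittance, open_invoices: Dict[str, Decimal]) -> List[str]:
    """Guardrail step: only clear a posting if the paid amount matches the open invoice."""
    expected = open_invoices.get(rem.invoice_id)
    if expected is None:
        return [f"unknown invoice {rem.invoice_id}"]
    if rem.amount_paid != expected:
        return [f"{rem.invoice_id}: paid {rem.amount_paid}, open {expected}"]
    return []


def handle(message: str, open_invoices: Dict[str, Decimal]) -> Outcome:
    route = classify(message)
    if route != "remittance":
        # Handoff point: a dispute-intake or collections-response agent would take over here.
        return Outcome(route=route, ok_to_post=False)
    rem = extract_remittance(message)
    if rem is None:
        return Outcome(route=route, ok_to_post=False, exceptions=["no invoice/amount found"])
    problems = amount_guardrail(rem, open_invoices)
    # Anything flagged belongs in a human review queue rather than the AR system.
    return Outcome(route=route, ok_to_post=not problems, exceptions=problems)
```

Using `Decimal` rather than floats for amounts avoids rounding surprises in the match check; a real deployment would also need tolerance rules for partial payments and short pays.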

This isn't hypothetical — it's a deployment pattern that O2C-focused AI vendors are already building on. The advantage of understanding the Agents SDK directly is that it gives O2C operations teams the ability to evaluate vendor-built solutions intelligently, and gives technically capable finance teams the tools to build lightweight custom workflows without a full engineering engagement.

The Klarna Benchmark — Understanding What It Actually Proves

Klarna's AI deployment numbers get cited constantly in discussions of AI in finance. For context: in February 2024, Klarna launched an OpenAI-powered customer service assistant that handled 2.3 million conversations in its first month — two-thirds of all inbound volume.[21][22] Resolution time dropped from 11 minutes to 2 minutes (an 82% improvement). Repeat inquiry rates fell 25%. Klarna estimated the system's output as equivalent to 700 full-time agents.[21][22]

These numbers are real, sourced from Klarna's own PR Newswire release, and confirmed by OpenAI's official case study.[21][22]

What they prove for O2C specifically: high-volume, repeatable, inquiry-and-response workflows are exactly what mid-tier AI does well. Collections outreach, dispute intake acknowledgment, payment status inquiries, remittance confirmation — these are structurally similar to what Klarna's assistant was handling. Fast, pattern-driven, high-frequency.

The critical context: Klarna's assistant launched in February 2024, three months before GPT-4o was released, so it ran on an earlier-generation OpenAI model, not GPT-5, which launched 18 months later.[1] The performance that generated those numbers came from a model generation whose capability is now available at mid-tier prices. If anything, the benchmark tells you what is achievable with today's affordable models, not what requires frontier spend.

This is the right framing for selling AI investment internally in O2C: the Klarna results were not special. They were repeatable. The underlying workflow design is what drove the outcome, not exclusive access to an expensive model.

What the Microsoft Story Actually Is Right Now

A note on a significant item that has been misreported in some coverage: Microsoft's Dynamics 365 Collections Agent — the Copilot for Finance feature that was described in 2025 Wave 2 release plans as an AI-powered collections automation tool — was cancelled. The feature was deprioritized and removed before launch, with the change reflected in Microsoft's own release wave change history on February 13, 2026.[23]

This matters for two reasons. First, if your team has been anticipating that feature as part of a Dynamics 365 finance roadmap, it is not coming in this cycle. Second, it reflects a broader reality about where the Microsoft O2C AI story actually sits: for the most part, it is still a roadmap story for collections automation, not a live deployment story.

What IS available from the Microsoft stack today for O2C teams on Dynamics 365 Finance:

- Copilot in Dynamics 365 Finance — AI-assisted reconciliation, variance analysis, and Outlook integration for customer communications (generally available)

- 2026 Wave 1 Dynamics 365 Finance — collections prioritization enhancements are on the roadmap, but as of this writing these are planned features, not GA[24]

The value of the Microsoft stack for O2C today is Copilot for Finance's existing reconciliation and reporting features, plus the ability to connect GPT-4.1 or GPT-5 via the OpenAI API to build custom workflows on top of Dynamics data. That's a real and useful capability — it just requires more configuration than a native product feature would.

Salesforce + OpenAI — The Integration Worth Knowing, and Its Real Scope

On January 7, 2026, Salesforce launched the Agentforce Sales ChatGPT App (open beta), allowing users to query Salesforce CRM data directly from ChatGPT conversations — SOC-2 compliant, no training on customer data, custom data retention policies.[25] The Spring '26 release then added Agentforce Voice for Financial Services, which includes collections inquiry handling — a more direct O2C fit than the core Sales Cloud integration.[29]

Here's the important scoping detail: the core integration is Sales Cloud-scoped. It surfaces sales pipeline and CRM relationship data. It does not natively connect to AR aging data, collections history, or order management records.

For O2C teams running Salesforce as their AR or collections CRM, getting aging reports, customer payment history, and dispute context into AI workflows still requires building custom Agentforce flows or integrating via the OpenAI API directly against your Salesforce data objects. Agentforce Voice for Financial Services narrows this gap specifically for inbound collections inquiry handling — but a deliberate configuration build is still required for full AR workflow coverage.[25][29]

Don't let this be the version you get sold in a vendor demo. Ask specifically whether the workflow connects to your AR data objects, your collections activity, and your deduction management records — or whether it's working from Sales Cloud contact and opportunity data. Those are different data sets answering different questions.

What Benchmarks Actually Tell You — And What They Don't

Benchmarks are everywhere in AI coverage, and most of them are useless for understanding what these models will actually do with your AR data.

The Vals AI Finance Agent v1.1 leaderboard shows the top-scoring model, Claude Opus 4.6 Thinking, at 60.65% accuracy on realistic analyst-style tasks as of March 2026, with GPT-5.1 at 56.55% (the leaderboard is live and scores continue to shift).[26] Those are the frontier models. Mid-tier models sit well below both. The DualEntry benchmark, which tested GPT-5.4 on real accounting workflow tasks, found the best available model in March 2026 scored 77.3%, meaning even the top model failed more than one in five complex accounting tasks.[27] The Scale Labs PRBench Finance leaderboard shows GPT-5.4 as a strong performer but not uniquely dominant; Claude Haiku 4.5 and Gemini 2.5 Flash are competitive at significantly lower cost for structured task types.[28]

What this means for O2C teams: No model available today should be positioned for autonomous handling of high-stakes, judgment-intensive decisions — complex dispute resolution with legal exposure, credit underwriting for major accounts, exceptions requiring interpretation of unusual contract terms. The correct deployment architecture is human-in-the-loop for those decisions, with AI handling the preparation, summarization, and draft work that reduces the human time required.
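That architecture can be made explicit as a routing rule applied to every AI output before anything is acted on. A hypothetical sketch: the task categories mirror the examples above, and the $5,000 exposure threshold is a placeholder each team would set for itself.

```python
from dataclasses import dataclass

# Decisions the text says should stay with a person; names are hypothetical.
HIGH_STAKES_TASKS = {"dispute_resolution", "credit_underwriting", "contract_exception"}


@dataclass
class AIOutput:
    task: str            # e.g. "collections_email", "credit_underwriting"
    draft: str           # summary, draft, or recommendation produced by the model
    exposure_usd: float  # amount at stake in the underlying item


def disposition(output: AIOutput, review_above_usd: float = 5_000.0) -> str:
    """Return who acts on an AI output. The threshold is a placeholder, not guidance."""
    if output.task in HIGH_STAKES_TASKS:
        return "human_decides"  # AI prepared the material; a person makes the call
    if output.exposure_usd > review_above_usd:
        return "human_reviews"  # routine task, but the amount warrants a check first
    return "ai_handles"         # low-exposure volume work
```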

The models are genuinely impressive for classification, extraction, drafting, and summarization at volume. They are not reliably autonomous agents for consequential financial decisions. The teams that are getting the best results from AI in O2C understand this distinction clearly and build workflows that use each capability appropriately.

Where to Start: A Practical Entry Point for O2C Teams

If you're evaluating how to actually integrate the OpenAI stack into O2C operations, here's the sequencing that makes sense.

Start with ChatGPT Enterprise for your power users. The collections team, the credit analysts, the dispute coordinators who are doing the most document-heavy, communication-heavy work. Give them ChatGPT Enterprise with access to GPT-4.1 and the ability to upload documents. Have them use it for 30 days on real work — collections correspondence, credit memo drafting, dispute analysis, policy documentation. Measure time savings. This builds organizational fluency and surfaces the specific workflows worth automating.

Identify your three highest-volume, most repetitive workflows. For most O2C teams, these are some combination of: remittance extraction and cash application preparation, collections outreach drafting by aging bucket, and AR aging status reporting. These are your first automation candidates — and they are almost all GPT-4o mini or Gemini Flash territory, not GPT-5.

Evaluate your existing platforms first. Before building custom API workflows, check what the AI capabilities are in the O2C platforms you already have. HighRadius, Emagia, Gaviti, and Esker all have embedded AI capabilities, and many are already running on the same mid-tier models you'd integrate directly.[3] It may be faster to activate what's already licensed than to build net-new.

Build a Batch API workflow for one overnight process. Pick one reporting or classification task that currently runs manually and costs your team time every morning. Build it as a batch job using the OpenAI Batch API at the 50% discount rate. This creates a proof point that is financially measurable and demonstrates AI ROI in terms your finance partners will respond to.[8]
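A minimal sketch of such a job, assuming a nightly classification run over AR items. The JSONL line format (`custom_id`, `method`, `url`, `body`) and the `files.create(..., purpose="batch")` and `batches.create(...)` calls follow OpenAI's Batch API, but verify them against the current API reference; the item schema (`id`, `text`), the prompt, and the model choice are assumptions.

```python
import json
from typing import Dict, List

SYSTEM_PROMPT = (
    "Classify this accounts-receivable item as remittance, dispute, or inquiry. "
    "Reply with one word."
)


def build_batch_lines(items: List[Dict[str, str]], model: str = "gpt-4o-mini") -> List[str]:
    """Turn items shaped like {"id": ..., "text": ...} into Batch API JSONL lines."""
    lines = []
    for item in items:
        request = {
            "custom_id": item["id"],  # used to join results back to the source record
            "method": "POST",
            "url": "/v1/chat/completions",
            "body": {
                "model": model,
                "messages": [
                    {"role": "system", "content": SYSTEM_PROMPT},
                    {"role": "user", "content": item["text"]},
                ],
            },
        }
        lines.append(json.dumps(request))
    return lines


def write_jsonl(lines: List[str], path: str) -> None:
    with open(path, "w", encoding="utf-8") as f:
        f.write("\n".join(lines) + "\n")


def submit(path: str):
    """Upload the file and start a 24h batch. Needs the `openai` package and an API key."""
    from openai import OpenAI  # imported lazily so the builders above run without the SDK

    client = OpenAI()
    with open(path, "rb") as f:
        uploaded = client.files.create(file=f, purpose="batch")
    return client.batches.create(
        input_file_id=uploaded.id,
        endpoint="/v1/chat/completions",
        completion_window="24h",
    )
```

Write the lines with `write_jsonl`, call `submit` in the evening, and once the batch completes, download its output file and join each result back to the source record by `custom_id`.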

Use the Agents SDK for the workflow that spans multiple systems. The highest-leverage use case for custom AI workflow development in O2C is almost always the hand-off that currently requires a human to bridge two systems. The Agents SDK gives you the primitives to automate that bridge. Start with one.[20]

The Honest Summary

The OpenAI enterprise stack is real, it is enterprise-grade, and there are genuine use cases across O2C that it handles well right now. The security posture of ChatGPT Enterprise meets the bar for sensitive financial data. The mid-tier models are cost-effective for volume workflows. The Agents SDK provides a path to custom automation that doesn't require a dedicated engineering team.

The honest limitations: no model handles high-stakes autonomous decisions reliably. The Microsoft Dynamics deep integration story is still maturing — the Collections Agent was cancelled, not launched. The Salesforce integration needs custom configuration for AR use cases, not just activation.

The teams winning with AI in O2C right now are not the ones who picked the most impressive model. They are the ones who mapped their actual workflow problems to the right model at the right cost tier, built human-in-the-loop checkpoints into consequential decisions, and measured results with the same rigor they'd apply to any other process improvement.

That's the frame. Start there.

Part of The O2C Edge ongoing series — AI tools and strategies for Order-to-Cash professionals.

References

- [1] Wired. GPT-5 launch: August 7, 2025.
- [2] OpenAI. Introducing GPT-5.
- [3] Stuut. Complete List of Order-to-Cash Automation Platforms 2026.
- [4] OpenAI. GPT-4o mini: advancing cost-efficient intelligence.
- [5] SSON. Future of O2C Market Report (2025).
- [6] OpenAI. API Pricing (GPT-4.1, GPT-4.1-mini).
- [7] Artificial Analysis. Gemini 2.5 Flash pricing and benchmarks.
- [8] OpenAI. API Pricing (Batch API 50% discount).
- [9] AI News Grid. GPT-5.4 1M token context window.
- [10] Box. How OpenAI's GPT-5.2 delivers lightning-fast, specialist-level reasoning.
- [11] OpenAI. Enterprise Privacy & Security.
- [12] OpenAI. Trust Portal.
- [13] Christian & Timbers. ChatGPT reached 92% of Fortune 500 in 24 months.
- [14] OpenAI. 1 million businesses putting AI to work.
- [15] Reuters. OpenAI CFO: annualized revenue crosses $20B (Jan 2026).
- [16] Reuters. OpenAI tops $25B annualized revenue (Mar 2026).
- [17] OpenAI. Introducing Operator.
- [18] TechCrunch. OpenAI launches Operator.
- [19] TechCrunch. OpenAI upgrades Operator model (May 2025).
- [20] GitHub. OpenAI Agents SDK.
- [21] OpenAI. Klarna case study.
- [22] PR Newswire. Klarna AI assistant primary source.
- [23] Microsoft Learn. Collections Agent cancellation (Feb 13, 2026).
- [24] Microsoft Learn. Dynamics 365 Finance 2026 Wave 1 planned features.
- [25] OpenAI. Salesforce + OpenAI Agentforce integration.
- [26] Vals AI. Finance Agent v1.1 leaderboard (updated March 17, 2026).
- [27] DualEntry. GPT-5.4 real accounting workflow benchmark.
- [28] Scale Labs. PRBench Finance leaderboard.
- [29] Salesforce. Spring '26 release; Agentforce Voice for Financial Services.