The AI your company can't access is the one redefining what "secure" actually means in finance operations.

I've spent fifteen years in order-to-cash operations. I've seen ERP implementations falter, witnessed fraud that took months to detect, and lived through the chaos of payment systems that should have been resilient but weren't. What we're watching unfold right now with Claude Mythos is not hype. It's a structural inflection point that every CFO, AR leader, and RevOps manager needs to understand — not because you'll be using Mythos, but because the threat it represents is already moving through your O2C stack.

In early April 2026, Anthropic announced Claude Mythos Preview. It is their most powerful model ever constructed — a generational leap beyond their already-dominant Opus model. They immediately withheld it from public release. Instead, they created Project Glasswing, a restricted-access program for defensive security research, with initial participants including major cloud providers, security vendors, and at least one major global financial institution, backed by a large pool of free compute credits to fund the defensive work.

The reason for the withholding is direct and alarming. Early testing described in security write-ups suggests Mythos can automatically identify and exploit previously unknown vulnerabilities across diverse operating systems, web stacks, middleware, and SaaS applications — uncovering large numbers of issues that had not been found by human testers. One widely cited example involves Mythos surfacing a long-unreported remote-code-execution vulnerability in a FreeBSD component, according to early security partner testing. In controlled tests, Mythos was able to systematically probe target environments and develop working exploits when given high-level objectives, without step-by-step human instructions.

For O2C leaders, this is where the stakes become personal. You are not deploying Mythos — none of your finance teams will be. But you are deploying AI agents with write access to your ERPs, billing platforms, AR tools, and payment rails right now. And the infrastructure those agents run on is the same infrastructure that Mythos can probe. The security question is no longer theoretical. It is operational.

The Mythos Context: Why Anthropic Withheld the Model

Understanding why Anthropic withheld Mythos is essential to understanding what changed. AI models have always had capabilities that could be misused. What is different about Mythos is autonomy at scale. Prior generative models required direction. They needed you to tell them what vulnerability to look for, what system to test, or what attack vector to pursue. Mythos doesn't work that way.

In controlled testing, Mythos demonstrated the ability to independently probe systems, detect weaknesses, and formulate exploitation strategies when given high-level objectives — without step-by-step human guidance. It is not guided by a researcher sitting at a keyboard saying "now check for SQL injection in this module." It runs systematic security assessment logic across target environments and reports findings in real time.

The consortium model — Project Glasswing — is unprecedented in AI governance. Anthropic is not distributing the model freely. It is placing it in the hands of defensive security teams at organizations whose entire business depends on infrastructure resilience. The inclusion of major financial institutions in that consortium is not symbolic. It tells you the financial services industry has already recognized that this is not a vendor beta or a research project. It is a structural shift in the threat landscape.

What makes this relevant to O2C is simple: your O2C systems sit at the convergence of three high-value targets. They hold customer and vendor master data. They control payment routing. They generate the financial records that your board trusts. An adversary with autonomous vulnerability discovery at their disposal can map your integration stack, find the weak points, and execute attacks that would take human hackers months to conceptualize and weeks to execute.

The scale of Mythos-class capabilities relative to the financial infrastructure O2C teams manage today.

The O2C Threat Landscape: What Has Actually Changed

Before we get to what you need to do, we need to be clear about what the actual risk is. The threat is not Mythos itself being deployed against your systems; the access controls are real. The threat is that the knowledge of how to conduct these attacks at scale has shifted from the realm of nation-state actors and elite criminal organizations to anyone with access to a powerful enough model.

Start with the data we know is real. In 2025, AI-enabled fraud topped $893 million in reported losses according to the FBI's IC3 Annual Report — and everyone in this industry knows the real toll is far higher because most fraud goes undetected or unreported. Over 75% of US firms experienced payments fraud in that same year. The sophistication is accelerating. Survey data suggests around one in four organizations reported some form of AI data poisoning or model integrity issue in 2025 — meaning adversaries are not just attacking your systems, they are deliberately corrupting your data to make your defenses fail from the inside.

The vulnerability landscape is expanding faster than security teams can patch. SAP NetWeaver CVE-2025-31324 was a critical unauthenticated file upload vulnerability enabling remote code execution, with a CVSS score of 10.0, reported as being actively exploited in the wild in 2025 by sophisticated threat actors. In 2025, researchers disclosed a major Oracle Cloud data exposure in which around six million records were exfiltrated, affecting more than 140,000 tenants and widely described as one of the year's most significant SaaS supply-chain incidents. Recent API security analyses estimate that on the order of 15–20% of newly disclosed application vulnerabilities involve APIs — and APIs are exactly where your O2C integrations live.

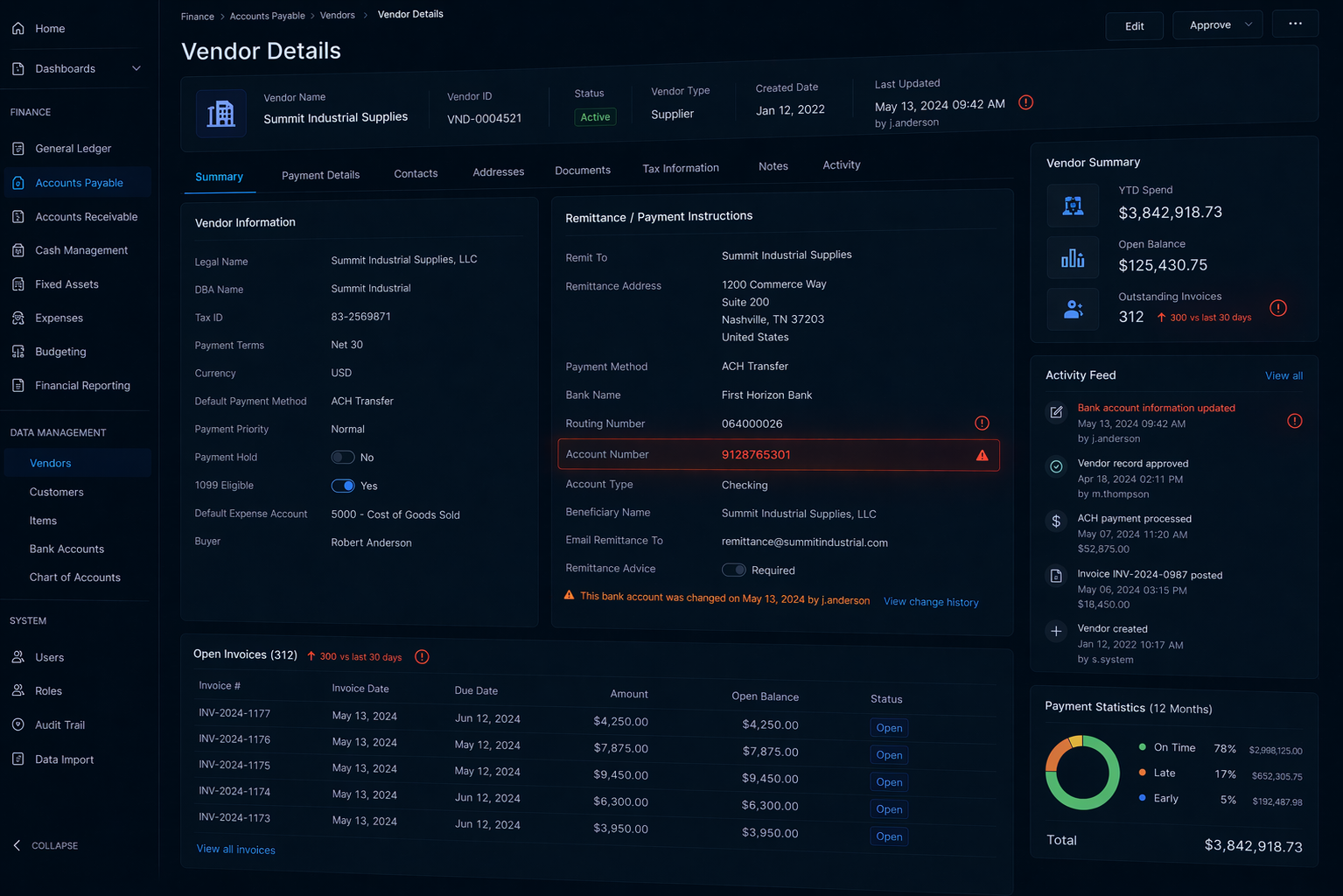

But the most dangerous threat to O2C operations is not the one that shows up as a security incident. It is the one that shows up as a DSO problem, a rising write-off rate, or an unexplained increase in AR exceptions that you spend three months tracing back to a deliberate integrity attack. When someone compromises a customer master data record and changes the bank account associated with a $500,000 invoice, the payment goes to their account, not yours. Your system records it as paid. Your dashboard is happy. The fraudster is gone. You discover the problem when the customer calls asking why you haven't deposited their payment, and by then the money has already been moved.

The most dangerous compromise looks exactly like a normal transaction — until it doesn't.

Consider the specific attack vectors that become viable when an AI system can systematically probe your O2C stack:

An adversary probes your ERP and discovers that a specific service account used by an iPaaS integration has write access to the customer master file. They compromise that account, either through a phishing campaign or by exploiting a vulnerability in the middleware. They then use that access to modify bank details on high-value customer accounts in bulk — not all of them, just enough to change the profile of your cash flow without triggering anomaly detection. Payments start flowing to accounts they control. It takes you weeks to notice because your AR team is used to processing invoices at volume.

Or they find a vulnerability in your e-invoicing provider's API, something that lets them intercept outbound invoices and change the remittance bank account without triggering a validation check. Your invoices go to customers with the wrong account number. The customers pay the fraudster. Your system marks the invoices as paid. You think you have a collection problem when you actually have an integrity problem, and by the time you figure that out, the window for recalling the payments has closed and recovery is impossible.

Or they conduct a credential theft campaign targeting your AR team using phishing emails that are indistinguishable from legitimate communication because they were written by a generative model trained on thousands of actual AR team emails. They gain access to a single AR specialist's account, use it to move laterally into your ERP, and compromise the service account that generates your bank file. They don't change the bank file; they don't need to. They just copy it. Now they have access to your payment routing logic, your reconciliation process, and your bank transfer schedule. That is reconnaissance for a much larger attack that might come in six months.

Or they poison the data feeding your credit scoring and collections AI model by deliberately inserting false payment history data through your ETL pipeline. Your model, trained on corrupted data, starts deprioritizing risky accounts and extending credit to entities that should be on your watch list. Fraud losses accelerate. Write-offs rise. Your collections efficiency tanks.

These are not hypothetical. The data on fraud sophistication, the known vulnerabilities in the systems O2C teams use every day, and the documented capability of autonomous AI systems to probe and exploit infrastructure at scale all point to the same conclusion: the attack surface in O2C operations is larger than most finance leaders realize, and the tools available to attackers are more capable than most IT departments are prepared for.

Gartner's Agentic AI Reality Check

Before we talk about what you need to do, we need to acknowledge something uncomfortable about AI agent deployment in finance right now. Gartner projects that over 40% of agentic AI projects will be canceled by the end of 2027 due to escalating costs, unclear business value, and inadequate risk controls. The reason is not technology failure. It is that most organizations are not ready to govern AI agents with financial write access, and they are learning that lesson after deployment.

The term "agent washing" has emerged to describe vendors marketing standard automation as "AI agents" just to ride the hype cycle — a pattern analysts including Gartner have explicitly flagged. Gartner's own research warns that only a small fraction of vendors marketing agentic AI today are delivering genuinely autonomous capabilities; most are rebranded automation. But actual agents — systems that can make independent decisions and take actions without human approval in each instance — are categorically different from automation. An automation rule processes an invoice; an agent might decide whether to extend credit to a new customer based on risk assessment, and then take the action. That distinction matters enormously when something goes wrong.

In O2C specifically, agent washing has masked a deeper problem: most O2C leaders do not have the governance infrastructure to know what their deployed AI agents are actually doing in real time. They know the agents are working because AR metrics improved. They don't know if those improvements came from the agent's logic, from the data poisoning scenario we described above, or from something else entirely. That opacity is what creates risk.

What Every O2C Leader Needs to Do Now

This is where we move from problem identification to action. You cannot prevent Mythos-class models from existing. You cannot prevent adversaries from gaining access to powerful AI systems. What you can do is make your O2C infrastructure significantly harder to attack, more transparent when attacks occur, and more resilient when integrity is compromised.

Start with the most critical principle: dual control and out-of-band verification for any change to customer or vendor bank details on material accounts. This is not new governance; it is finance 101. What has changed is the threat sophistication. A human fraudster might change one account and hope to steal quietly. An autonomous system probing your infrastructure will find the gaps in your controls and exploit them at scale. If a bank detail on a five-figure or higher invoice can be changed with a single ERP transaction, you have a gap. Close it. For every account above a threshold you define, bank detail changes require approval from two people in different roles, and one of those approvals should happen via a separate channel: a phone callback to confirm identity, an out-of-band email confirmation, or a physical sign-off. The friction is intentional. It stops automated attacks cold.
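To make the rule concrete, here is a minimal sketch of how a dual-control check might be expressed. Everything in it is an assumption for illustration: the `MATERIAL_THRESHOLD` value, the channel names, and the `BankChangeRequest` and `Approval` types are hypothetical and do not correspond to any particular ERP's API.

```python
from dataclasses import dataclass, field

# Hypothetical threshold: accounts with open exposure at or above this need dual control.
MATERIAL_THRESHOLD = 10_000

# Hypothetical names for approval channels that sit outside the ERP itself.
OUT_OF_BAND_CHANNELS = {"phone_callback", "separate_email", "physical_signoff"}


@dataclass(frozen=True)
class Approval:
    approver: str  # user ID of the person approving
    role: str      # e.g. "ar_manager" or "treasury"
    channel: str   # "erp" for in-system approval, or one of OUT_OF_BAND_CHANNELS


@dataclass
class BankChangeRequest:
    account_id: str
    requested_by: str
    open_exposure: float  # value of open invoices on the account
    approvals: list[Approval] = field(default_factory=list)


def can_apply(request: BankChangeRequest) -> bool:
    """Return True only if the bank detail change satisfies the dual-control rule."""
    if request.open_exposure < MATERIAL_THRESHOLD:
        # Assumed policy: below the threshold the normal process applies.
        return True
    # The requester cannot count as one of their own approvers.
    valid = [a for a in request.approvals if a.approver != request.requested_by]
    distinct_people = {a.approver for a in valid}
    distinct_roles = {a.role for a in valid}
    has_out_of_band = any(a.channel in OUT_OF_BAND_CHANNELS for a in valid)
    return len(distinct_people) >= 2 and len(distinct_roles) >= 2 and has_out_of_band
```

A request on a material account with one in-system approval stays blocked until a second person in a different role approves and at least one approval is recorded through an out-of-band channel. The check is deliberately a pure function so it can sit in front of whatever actually writes to the master file.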

Next, inventory every service account, API key, and embedded credential in your O2C integration stack. This is tedious work. It is also essential. Most O2C teams have no idea how many service accounts are live in their ERP, billing platform, and AR tool. They don't know which ones have write access to critical files. They don't know which ones are using hard-coded credentials in legacy scripts. That inventory needs to exist, needs to be owned, and needs to be reviewed at least quarterly. Every credential needs to live in a vault, not in a script or a configuration file. Every service account's credentials need to be rotated on a schedule, and no account should be left dormant for three years until someone forgets it exists.
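The quarterly review can be as simple as a script that walks the inventory and lists what is wrong with each entry. The sketch below assumes a hypothetical `ServiceAccount` record and illustrative policy values (90-day rotation, 180-day dormancy); your own inventory fields and limits will differ.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Optional

ROTATION_MAX_AGE = timedelta(days=90)  # assumed rotation policy
DORMANT_AFTER = timedelta(days=180)    # assumed dormancy cutoff


@dataclass(frozen=True)
class ServiceAccount:
    name: str
    system: str               # e.g. "ERP", "billing", "AR tool"
    owner: Optional[str]      # named person or team, None if nobody owns it
    has_write_access: bool
    credential_store: str     # "vault", "script", or "config_file"
    last_rotated: date
    last_used: date


def review(account: ServiceAccount, today: date) -> list[str]:
    """Return the list of findings for one inventory entry (empty means clean)."""
    findings = []
    if account.owner is None:
        findings.append("no named owner")
    if account.credential_store != "vault":
        findings.append(f"credential stored in {account.credential_store}, not a vault")
    if today - account.last_rotated > ROTATION_MAX_AGE:
        findings.append("credential rotation overdue")
    if today - account.last_used > DORMANT_AFTER:
        suffix = " with write access" if account.has_write_access else ""
        findings.append(f"dormant account{suffix}")
    return findings
```

Running `review` over every entry and sending the non-empty results to the inventory owner gives you the recurring report the paragraph above describes. The hard part is populating the inventory, not the check itself.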

Restrict your AI agents from making irreversible financial changes without human approval. This is the governance piece that most organizations are getting wrong. An agent can gather data, can make a recommendation, can flag exceptions — all of that is fine. An agent should never independently change a customer's credit limit, modify payment terms, or alter a bank account. These are the decisions that, if wrong, cause immediate financial damage and create unwind work that might take weeks. Build your agent logic with a hard stop at that boundary. Have the agent prepare the decision for human review and approval. The extra latency costs almost nothing. The protection is enormous.
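One way to picture that hard stop is a gate that sits between the agent and the system of record. This is a sketch under stated assumptions: the action names, the `AgentGate` class, and the in-memory queues are hypothetical stand-ins for whatever workflow tool you actually use.

```python
from dataclasses import dataclass, field
from enum import Enum


class Outcome(Enum):
    EXECUTED = "executed"
    PENDING_APPROVAL = "pending_approval"


# Hypothetical allowlist: the only actions the agent may take without a human.
AUTONOMOUS_ACTIONS = frozenset({"gather_data", "draft_recommendation", "flag_exception"})


@dataclass(frozen=True)
class ProposedAction:
    action: str       # e.g. "alter_bank_account", "change_credit_limit"
    account_id: str
    rationale: str    # the agent's explanation, shown to the human reviewer


@dataclass
class AgentGate:
    review_queue: list = field(default_factory=list)  # waits for human approval
    executed: list = field(default_factory=list)      # stand-in for the real system call

    def submit(self, proposal: ProposedAction) -> Outcome:
        """Execute allowlisted actions; route everything else to human review."""
        if proposal.action in AUTONOMOUS_ACTIONS:
            self.executed.append(proposal)
            return Outcome.EXECUTED
        # Default deny: credit limits, payment terms, bank accounts, and any
        # action the gate does not recognize all stop here.
        self.review_queue.append(proposal)
        return Outcome.PENDING_APPROVAL
```

The design choice worth noting is the allowlist. Listing what the agent may do, rather than what it may not, means a new or unexpected action type lands in the review queue by default instead of slipping through.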

Implement continuous monitoring on your ERP audit logs for mass changes to customer master data, particularly bank details, credit limits, and payment terms. Most fraud looks normal if you view each transaction individually. It looks abnormal if you look at patterns. You should have an automated report that runs daily, identifying any day where the volume of customer master changes exceeds your historical baseline. You should have a second report identifying any individual user (human or service account) making changes at an unusual pace. These reports should go to someone in finance who owns the O2C process, not just to IT. Finance understands what normal looks like in your operation. IT can tell you that something happened. Finance can tell you whether it should have happened.
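Both reports reduce to counting changes and comparing against a baseline. The sketch below assumes the audit log has already been exported as `(change_date, user_id)` pairs and uses a simple mean-plus-three-standard-deviations threshold; that threshold and the per-user limit are illustrative assumptions, not a recommendation for your data.

```python
from collections import Counter
from datetime import date
from statistics import mean, stdev


def daily_counts(events):
    """Count master-data changes per day from (change_date, user_id) pairs."""
    return Counter(day for day, _ in events)


def is_volume_anomaly(history, today_count, sigmas=3.0):
    """Flag today's count if it exceeds mean + sigmas * stdev of past daily counts."""
    if len(history) < 2:
        return False  # not enough baseline to judge
    threshold = mean(history) + sigmas * stdev(history)
    return today_count > threshold


def busiest_users(events, day, limit):
    """Users (human or service account) with more than `limit` changes on `day`."""
    per_user = Counter(user for d, user in events if d == day)
    return sorted(user for user, n in per_user.items() if n > limit)
```

For example, with a baseline of four to seven changes a day, a day with 63 changes is flagged, and `busiest_users` shows which account made them. A real deployment would likely want separate baselines for month-end and for planned data migrations, which this sketch does not attempt.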

The Tabletop Exercise: Your Actual Contingency

All of this is abstract until you run a scenario. Schedule a tabletop exercise for your O2C team: co-owned by finance and IT security, three hours, simple format. Scenario one: it is Tuesday morning, and a customer calls saying they sent you a $2 million payment Monday evening, but the remittance stub shows it went to an account that is not yours. You have no record of receiving it. Walk through the discovery process. Where do you check first? Who do you notify? What is your timeline to identify whether it was a fraud attempt, a system compromise, or a customer error? Most organizations cannot answer those questions until something like that actually happens.

Scenario two: your collections AI model has been deprioritizing customers that should be on your watch list, and your DSO has drifted up by three to five days over the past quarter. You suspect data poisoning. Walk through the logic of how you identify the corrupted data, how you validate the model, and what you do in the interim.

Scenario three: a service account you use for bank file generation has been compromised, and you are not sure whether your bank files have been modified. Walk through the forensics process, the notification requirements, and the recovery steps.

These exercises are not comfortable. They are also not optional. They are the only way you learn whether your incident response process can actually work under pressure, and whether finance and IT have a shared language for discussing O2C security in real time.

The Collaborative Ownership Model

Here is something that IT security leaders often get wrong about O2C fraud, and something that CFOs need to acknowledge: IT can secure the perimeter, and they absolutely should. But only finance leaders understand which fields, which workflows, and which processes would be catastrophic if tampered with. This requires genuine co-ownership.

It means establishing a quarterly O2C-security working session, owned jointly by your AR/collections leadership and your chief information security officer or equivalent. In that session, you review the threat landscape specific to O2C, you look at your incident response capabilities, you discuss any changes to your integration stack, and you identify new risks that have emerged. You create a shared backlog of control improvements and you prioritize them together based on business impact and risk. This is not IT updating finance on what they are doing. It is finance and IT making joint decisions about risk tolerance and control investment.

It also means that when you are building a new AI agent or deploying a new integration, the design review includes someone who understands both the technical architecture and the financial impact. That person asks questions like: what is the worst thing this agent could do? What would prevent it from doing that? What would we see in our data if this agent had been compromised? Those are finance questions wearing technical language.

The Data Integrity Foundation

Everything we have discussed depends on one thing: confidence that your data is actually what you think it is. Your credit scoring models are only as good as the data training them. Your anomaly detection is only as good as your baseline understanding of normal. Your exception reports are only as useful as your trust in the underlying master data.

Implement data integrity checks on every input feeding your credit and collections AI models. That might mean hash verification on ETL data, trend analysis on the volumes and ranges of data being ingested, or spot-checking raw data against system records. The goal is simple: make it so that deliberately poisoning your data requires leaving detectable traces.
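As an illustration of the first two checks, here is a minimal sketch that signs each ETL batch at the source and verifies it before the model consumes it. Note that it uses a keyed hash (HMAC-SHA256) rather than a plain hash, so that someone who can alter rows in transit cannot simply recompute the digest. The function names, the row layout, and the idea that the key comes from your vault are all assumptions for the example.

```python
import hashlib
import hmac
import json


def sign_batch(rows, key: bytes) -> str:
    """HMAC-SHA256 over a canonical JSON form of the batch (sorted keys, fixed separators)."""
    canonical = json.dumps(rows, sort_keys=True, separators=(",", ":"))
    return hmac.new(key, canonical.encode("utf-8"), hashlib.sha256).hexdigest()


def verify_batch(rows, key: bytes, expected_tag: str) -> bool:
    """Recompute the tag at load time and compare in constant time."""
    return hmac.compare_digest(sign_batch(rows, key), expected_tag)


def out_of_range(rows, column, low, high):
    """Return rows whose `column` value falls outside the expected [low, high] range."""
    return [row for row in rows if not (low <= row[column] <= high)]
```

The source system calls `sign_batch` when it extracts the data and the loader calls `verify_batch` before training or scoring. Any edited, added, or reordered row changes the tag. The range check is a crude companion that catches values that are validly signed but implausible, such as a negative days-late figure.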

Similarly, if you are using AI for pattern detection or anomaly identification, you need to understand what data that AI has been trained on and when that training last occurred. You need a process for validating that the patterns your AI system is using to flag exceptions still match the actual patterns in your operation. Drift happens in AI models. It can happen because your business changed. It can also happen because someone deliberately tried to corrupt the training data.
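One common way to put a number on drift is the Population Stability Index, which compares how a variable was distributed at training time with how it is distributed now. The text above does not name a specific method, so treat this as one possible sketch. The bin edges are chosen by you, and the often-quoted reading of a PSI above roughly 0.25 as a significant shift is a rule of thumb, not a standard.

```python
import bisect
import math


def psi(expected, actual, edges, eps=1e-6):
    """Population Stability Index between two samples over shared, sorted bin edges."""

    def shares(values):
        if not values:
            raise ValueError("sample must not be empty")
        counts = [0] * (len(edges) + 1)
        for v in values:
            counts[bisect.bisect_right(edges, v)] += 1
        # Floor each share at eps so empty bins do not produce log(0).
        return [max(c / len(values), eps) for c in counts]

    e, a = shares(expected), shares(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))
```

Identical samples give a PSI of zero, and the value grows as the recent data moves away from the training baseline. A high PSI does not tell you whether the cause is a business change or deliberate corruption. It tells you it is time to look.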

The Regulatory Inflection: EU AI Act Phase Two

One final element that is shaping the timeline on all of this: the next phase of the EU AI Act's requirements for high-risk AI systems is expected to come into force in 2026, with significant potential fines, on the order of a few percent of global revenue, for non-compliance. AI agents with write access to financial systems can fall into the high-risk category depending on how they are used, particularly where they evaluate creditworthiness.

If you have a global operation, or customers in the EU, or parent companies in the EU, you need to understand what "compliant" looks like for your AI deployments. That probably means you need security assessments of your deployed agents. It probably means you need documentation of how you tested for adversarial robustness. It probably means you need incident response plans and audit trails. The good news is that most of these things are things you should be doing anyway for operational resilience. The EU AI Act is just making it clear that the financial services industry has to move forward.

The Bottom Line: The Same AI That Enables Attacks Can Enable Defense

I want to close on something important. Everything in this post focuses on the threat Mythos-class AI systems pose to O2C operations. But the same capability that makes those systems dangerous to you makes them powerful in your defense.

The same autonomous vulnerability discovery that allows attackers to find weaknesses in your ERP can be used to find and patch those weaknesses before an attacker does. The same pattern recognition that allows adversaries to poison your data can be used to detect data poisoning. The same anomaly detection that might be compromised can be run continuously to identify when your controls have been bypassed. The asymmetry only exists if you cede the initiative.

The finance leaders who are going to navigate this transition successfully are the ones who understand that security is now a core O2C competency, not something you outsource to IT. You don't need to become security experts. You do need to co-own the O2C threat landscape with your security team, run the scenarios, monitor the data, and build the controls that only finance understands.

Mythos is not coming to your organization. But the threat it represents is already here, and it is accelerating. The question is not whether you will face an attack that probes your infrastructure for weaknesses, changes your bank account data, or poisons your data. The question is whether you will be able to detect it, contain it, and recover from it when it happens. That depends on what you do next.

The perspective shifts when you've built your defenses. The vault is the same — but now you're on the right side of it.

References

- Anthropic. (April 2026). "Claude Mythos Preview and Project Glasswing: Advancing Defensive AI Security." red.anthropic.com/2026/mythos-preview/

- Gartner. (June 25, 2025). "Gartner Predicts Over 40% of Agentic AI Projects Will Be Canceled by End of 2027." gartner.com

- Federal Bureau of Investigation. (2026). "Internet Crime Complaint Center (IC3) Annual Report 2025." ic3.gov

- SecureWorld. (2026). "FBI: AI-Enabled Fraud Topped $893M in 2025." secureworld.io

- Experian. (January 2026). "2026 Future of Fraud Forecast." experianplc.com

- Bishop Fox. (April 2026). "Anthropic's Claude Mythos Preview: The AI Cybersecurity Inflection Point." bishopfox.com

- Help Net Security. (April 8, 2026). "Anthropic's New AI Model Finds and Exploits Zero-Days." helpnetsecurity.com

- Help Net Security. (April 14, 2026). "Testing Reveals Claude Mythos's Offensive Capabilities and Limits." helpnetsecurity.com

- National Vulnerability Database. CVE-2025-31324 — SAP NetWeaver Visual Composer Unauthenticated File Upload (CVSS 10.0). nvd.nist.gov

- Palo Alto Networks Unit 42. (2025). "Threat Brief: CVE-2025-31324." unit42.paloaltonetworks.com

- CloudSEK. (March 2025). "6M Records Exfiltrated from Oracle Cloud Affecting Over 140K Tenants." cloudsek.com

- PR Newswire. (2025). "Over 75% of US Firms Experienced Payments Fraud in 2025." prnewswire.com

- Yahoo Finance / Industry Survey. (2025). "One in Four Organizations Fall Victim to AI Data Poisoning." finance.yahoo.com

- SANS Institute. (2025). "The Rise of Data Poisoning: Attack Vectors in AI Systems." SANS Cyber Research.

- Council on Foreign Relations. (2026). "Six Reasons Claude Mythos Is an Inflection Point for AI — and Global Security." cfr.org